Business risks lurk in many places. For cybersecurity, the worst risks are often the ones you never saw coming.

A Real-World Example

To illustrate, consider this real example: a manufacturing conglomerate had an engineer build a JavaScript connector for remote access to a mainframe, and the engineer inadvertently exposed it to the internet. How do you discover this risk and its potential damage? A penetration test will not help unless you happen to test that particular machine among hundreds or thousands of servers. A vulnerability scan will not help either. The exposure is not a known flaw listed in the Common Vulnerabilities and Exposures (CVE) catalog, so the scanner has nothing to match it against. For most organizations relying on older tools, a risk such as this one is an open door to attackers.

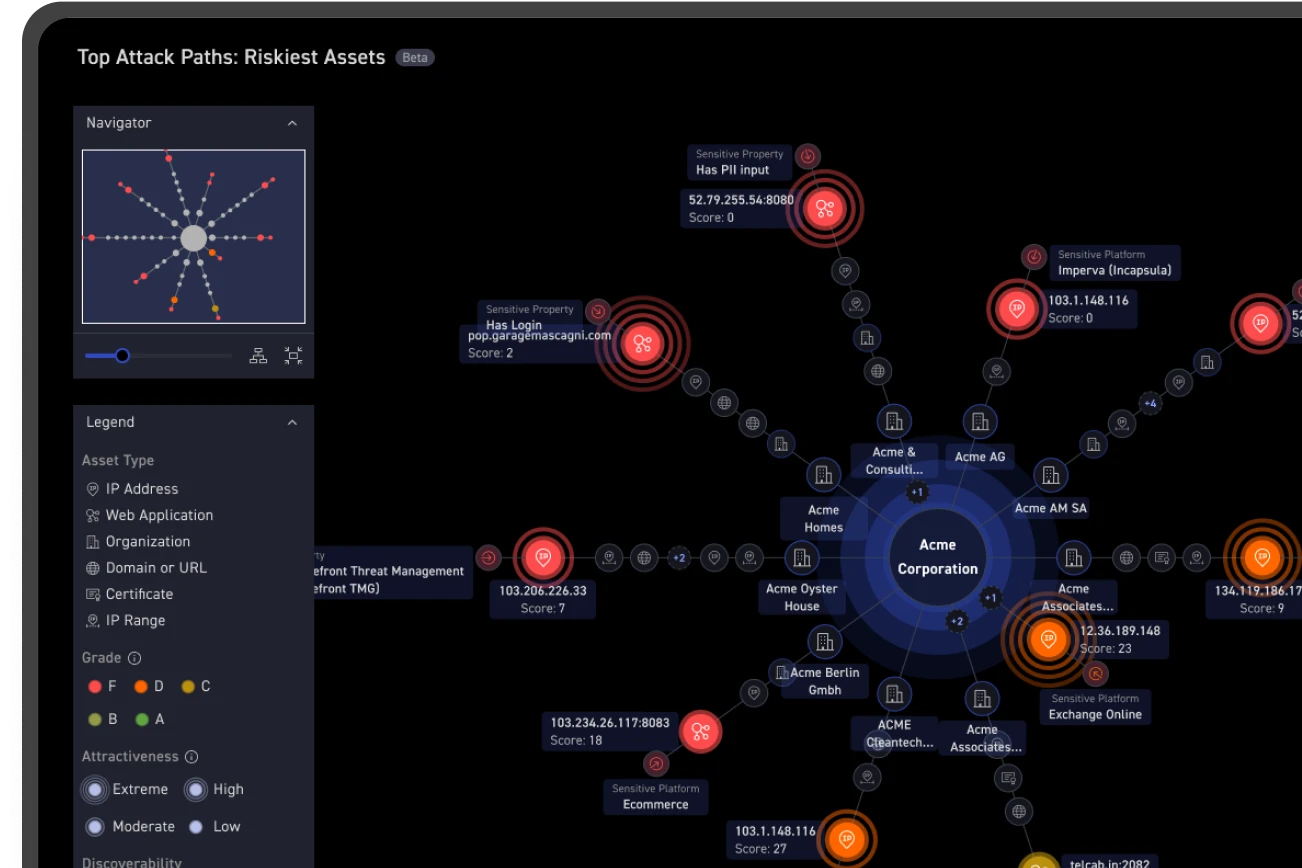

The attack surface protection principle of discovery helps you detect the existence of unknown and potentially vulnerable assets. These are the soft spots in the extended attack surface that are susceptible to a breach, like the exposed mainframe connector above. This article describes the principle of assessment, which guides an organization's tactics for testing the extended attack surface to determine the potential for a breach and the impact an attack could have.

Security Testing Is the Anchor of Risk Assessment

Cyber risk assessment is a fundamental principle of attack surface protection. Organizations may assess risk in several ways to discover vulnerabilities, identify threats and achieve compliance. Standard tactics may include application security tests, vulnerability assessment scans, penetration tests, or Red Team tests.

Most large enterprises probably do all of the above, and perhaps more. Despite these efforts, accidental exposures like the mainframe example above are common, and public breach notices continue at a brisk pace. Let's take a closer look at how these standard assessment processes can fall short of protecting the extended attack surface.

Scope of Testing

Scope of testing is about how much of your extended attack surface is "enough" to test. It can mean enough to identify every exposure that could result in a breach. Or, as recent research that we commissioned and Informa Tech conducted shows, it can mean as much as you can test before you exceed your testing budget. Typically, a large enterprise approaches scoping the way it approaches compliance, such as the audit requirements of the PCI Data Security Standard (PCI DSS). PCI DSS audits focus on one area of risk: the payment cardholder data environment. When there are large numbers of system components, sampling (testing a subset of all IT assets) is permitted. The sample must be statistically large enough to satisfy auditors that controls are implemented as expected.

Sampling Doesn't Equal 100% Security Coverage

Sampling is an administrative convenience or a budgetary necessity. While it may earn official approval of security efforts (that is, compliance), let's be clear: sampling does not test every asset in the attack surface, so it offers no conclusive proof, or even an implication, of 100% security coverage.

There's another tricky wrinkle. Sampling assumes that your security team has a clear view of every asset in the extended attack surface, because an asset missing from the inventory can never be drawn into the sample. On top of that, the Informa Tech research shows that the majority of organizations pen test 50% or less of their attack surface. Most pen tests barely scratch the surface; as with an iceberg, the biggest risks lie out of sight.
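To put rough numbers on the sampling and coverage points above, here is a minimal Python sketch. It is an illustration, not a method from the Informa Tech research. It assumes a hypothetical estate of `total_assets` inventoried assets, of which `exposed` carry a breach-enabling exposure, and a test that picks `sampled` assets uniformly at random. The chance that the test misses every exposed asset is then the hypergeometric probability of zero hits, C(N - m, k) / C(N, k). Assets that never made it into the inventory are outside this model; their chance of being missed is 100%.

```python
from math import comb


def miss_probability(total_assets: int, sampled: int, exposed: int) -> float:
    """Probability that a uniform random sample of `sampled` assets
    contains none of the `exposed` assets (hypergeometric, zero hits).

    Hypothetical model: every exposed asset is in the inventory being
    sampled. Assets outside the inventory are always missed.
    """
    if not 0 <= sampled <= total_assets:
        raise ValueError("sampled must be between 0 and total_assets")
    if not 0 <= exposed <= total_assets:
        raise ValueError("exposed must be between 0 and total_assets")
    # Ways to draw the whole sample from unexposed assets, divided by all
    # ways to draw the sample. math.comb returns 0 when k > n, so a sample
    # larger than the unexposed pool correctly yields a miss chance of 0.
    return comb(total_assets - exposed, sampled) / comb(total_assets, sampled)


if __name__ == "__main__":
    # Hypothetical estate: 1,000 assets, one exposed connector,
    # tested at several coverage levels.
    for sampled in (100, 250, 500, 1000):
        p = miss_probability(1000, sampled, exposed=1)
        print(f"test {sampled:>4} of 1000 assets -> miss chance {p:.0%}")
```

With a single exposed asset the formula reduces to (N - k)/N, so testing half of the estate leaves an even chance of never touching the one asset an attacker needs.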

An organization may counter that it uses a risk-based approach and is willing to accept a defined amount of financial risk for not testing everything. Given the scenarios described above, it is hard to see how a CFO could feel comfortable with a security assessment built on so much missing data. An attacker does not care whether an enterprise is statistically secure. Attackers follow the path of least resistance, which usually runs through unprotected assets. All they need is the right set of exploitable vulnerabilities, and they are in your systems.

Frequency of Testing

The frequency of security tests too often mirrors the bare minimum requirements of compliance regimes. For pen testing, the Informa Tech research shows that 72% of organizations test quarterly or less often and only 22% test monthly or more often. Is that often enough?

Before you answer, consider the attacker's perspective, which is all about finding the path of least resistance to a breach. Thousands of attackers continuously scan and probe vast, random IP ranges as well as the assets of large companies. They look for easy openings, such as a known vulnerability that a company was slow to patch or a misconfigured cloud asset that was never fixed. How often does a large enterprise's technology change? Does your organization's testing frequency keep pace with that rate of change? Most organizations probably have room for improvement here.
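One way to reason about the frequency question is to estimate how long a new exposure stays open before the next scheduled test. The Python sketch below is a simplified model, not data from the research. It assumes exposures appear at uniformly random times between tests spaced `interval_days` apart, and that each one is found and fixed at the very next test (an optimistic assumption). Under those assumptions the average window is half the interval, and by Little's law the average number of exposures open at any moment is the arrival rate times that window. The rate used below (`new_per_day`) is a hypothetical figure chosen for illustration.

```python
import random


def mean_window_days(interval_days: float) -> float:
    """Average days an exposure stays open if it appears uniformly at
    random between tests and is fixed at the next test."""
    return interval_days / 2


def expected_open_exposures(new_per_day: float, interval_days: float) -> float:
    """Little's law: average open exposures = arrival rate x average window."""
    return new_per_day * mean_window_days(interval_days)


def simulate_mean_window(interval_days: float, trials: int = 100_000, seed: int = 0) -> float:
    """Monte Carlo check of mean_window_days under the same assumptions."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(trials):
        appears_at = rng.uniform(0, interval_days)  # day the exposure appears
        total += interval_days - appears_at  # days until the next test finds it
    return total / trials


if __name__ == "__main__":
    # Hypothetical: two new exposures introduced per day across the estate.
    new_per_day = 2.0
    for label, interval in (("quarterly", 91), ("monthly", 30), ("weekly", 7)):
        print(
            f"{label:>9}: ~{mean_window_days(interval):.1f} days open on average, "
            f"~{expected_open_exposures(new_per_day, interval):.0f} exposures open at any time"
        )
```

In this model the exposure window shrinks in direct proportion to the test interval, so moving from quarterly to monthly testing cuts both the average window and the standing backlog of open exposures to roughly a third.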

Manual or Automated – Is There Really a Choice?

Given the painful reality that testing for complex attack vectors often entails expensive, highly manual processes, it is not surprising that enterprises take the easier path of statistical compliance. This can be a deadly trap. Consider again our unfortunate manufacturing conglomerate and the fact that many risks cannot be found with a CVE-focused scanner. According to research from Gartner, Inc., 99% of public cloud breaches are the fault of customers who misconfigure access and security controls, and the analysts predict this problem will persist through 2025. A misconfiguration is not a software flaw with a CVE identifier, so a scanner that matches against CVEs has no way to flag it.

Attackers can also combine other hard-to-find risks to breach your organization's data. Examples include accidental exposure of sensitive data, authentication and encryption weaknesses, misconfigured applications or assets, and network architecture flaws.

As you assess your organization's capabilities for addressing these issues, keep in mind that legacy security testing schemes struggle with both frequency and scope. Going forward, I urge you to explore more cost-effective automation that can cover 100% of your extended attack surface. To help in that quest, our next article will address the third principle of attack surface protection, Prioritize: identifying which exposures pose material threats to your enterprise so you can optimize remediation.