What Is Attack Surface Discovery?

Attack surface discovery is the systematic, often automated process of identifying, mapping, and inventorying all internet-facing digital assets, such as servers, cloud resources, APIs, and forgotten “shadow IT”, to uncover potentially exploitable vulnerabilities. It is the first step of External Attack Surface Management (EASM), providing a near-real-time, attacker’s-eye view that helps organizations proactively secure, reduce, and manage their digital exposure.

The goal of attack surface discovery is to provide security teams with a comprehensive, real-time view of all internet-facing assets, which comprise an organization’s attack surface. This is critical for understanding exposure and minimizing the risk of unauthorized access.

As organizations adopt cloud infrastructure and decentralized development practices, assets can be deployed rapidly and sometimes without full security oversight. Attack surface discovery helps detect these unknown or forgotten assets before they can be exploited.

Why Attack Surface Discovery Matters

As environments grow more complex, visibility into all external-facing assets becomes essential for managing risk effectively. Here are the key reasons why attack surface management, including discovery, matters:

- Uncovers unknown assets: Helps identify shadow IT, forgotten subdomains, or services deployed without security review.

- Reduces exposure: Minimizes publicly accessible entry points that attackers could exploit by enabling early detection and remediation.

- Improves incident response: Provides accurate asset inventories that speed up investigations and containment during security incidents.

- Enables continuous monitoring: Detects changes in the external environment, such as new services or misconfigurations, in near real time.

- Supports compliance: Helps demonstrate asset visibility and risk management practices required by security frameworks and regulations.

- Prioritizes risk: Allows security teams to focus on high-risk assets by correlating asset exposure with threat intelligence and known vulnerabilities.

Attack Surface Discovery Concepts

Seeded vs. Seedless Discovery

Attack surface discovery can follow either a seeded or seedless approach, each with different implications for coverage and control:

- In seeded discovery, the process starts with known inputs, such as domain names, IP ranges, or cloud account identifiers, provided by the organization. These known “seeds” guide the discovery engine to map related assets. This approach is more controlled and typically yields more accurate, organization-specific results. However, it may miss assets that are unknown or not linked to the initial seeds.

- Seedless discovery does not rely on any predefined input. Instead, it uses techniques like certificate transparency logs, DNS enumeration, web crawling, and passive DNS data to independently uncover assets that appear to be associated with an organization. This method can expose shadow IT and rogue assets the organization is unaware of, but may also produce false positives and require more validation.

Many modern EASM tools combine both approaches to maximize visibility: seeded discovery ensures depth and accuracy, while seedless discovery broadens the net to catch unknowns.
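To make the distinction concrete, here is a minimal sketch of how a combined approach might sort candidate hostnames: those that fall under an organization-provided seed domain versus those that surfaced some other way and need extra validation. The function names and sample hostnames are hypothetical, and real tools use many more signals than suffix matching.

```python
def normalize(hostname):
    """Lowercase a hostname and drop surrounding whitespace and a trailing dot."""
    return hostname.strip().lower().rstrip(".")


def matches_seed(hostname, seed_domains):
    """Return True if hostname equals a seed domain or is a subdomain of one."""
    return any(hostname == seed or hostname.endswith("." + seed)
               for seed in seed_domains)


def split_candidates(candidates, seed_domains):
    """Separate seed-linked hostnames from ones that need further validation."""
    seeds = {normalize(s) for s in seed_domains}
    hosts = {normalize(h) for h in candidates}
    linked = sorted(h for h in hosts if matches_seed(h, seeds))
    unlinked = sorted(h for h in hosts if not matches_seed(h, seeds))
    return linked, unlinked
```

Hostnames in the second list are not necessarily unrelated to the organization. They are simply the ones where a seedless technique would need additional evidence before attributing them.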

External vs. Internal Scope

Attack surface discovery focuses on external scope, meaning assets that are exposed to the public internet and accessible by potential attackers. This includes web applications, public cloud storage, VPN endpoints, and exposed APIs. The goal is to understand what an external threat actor could see and target.

Internal scope refers to assets and services only accessible within the organization’s private network, such as internal databases, intranet applications, or systems behind a firewall. While important for internal security assessments, these are generally out of scope for external attack surface management.

Focusing on the external scope helps prioritize the most immediate and exploitable risks: those that don’t require internal access or insider privileges to attack.

Discovery vs. Inventory vs. Monitoring

Attack surface management includes discovery, asset inventory, and monitoring. Together, they form a continuous cycle for managing external exposure.

Discovery focuses on finding assets that are exposed to the internet. This process identifies domains, subdomains, IP addresses, cloud services, APIs, and other publicly reachable resources. Discovery answers the question: What assets exist that could be targeted by attackers? It often relies on techniques such as DNS enumeration, certificate log analysis, and internet-wide scanning.

Inventory organizes and maintains a structured record of the assets that have been discovered. This includes details such as ownership, asset type, associated business unit, and known vulnerabilities. Inventory answers the question: What do we know about each asset and who is responsible for it? A reliable inventory helps security teams track assets over time and prioritize remediation.

Monitoring tracks changes in the attack surface after assets have been identified and cataloged. It continuously checks for new assets, configuration changes, expired certificates, or newly exposed services. Monitoring answers the question: What has changed in the environment that might introduce new risk?

In practice, these three functions operate together. Discovery identifies assets, inventory documents them, and monitoring ensures the organization remains aware of changes that could increase exposure. Continuous monitoring is especially important in modern environments where cloud resources and services can appear or disappear quickly.

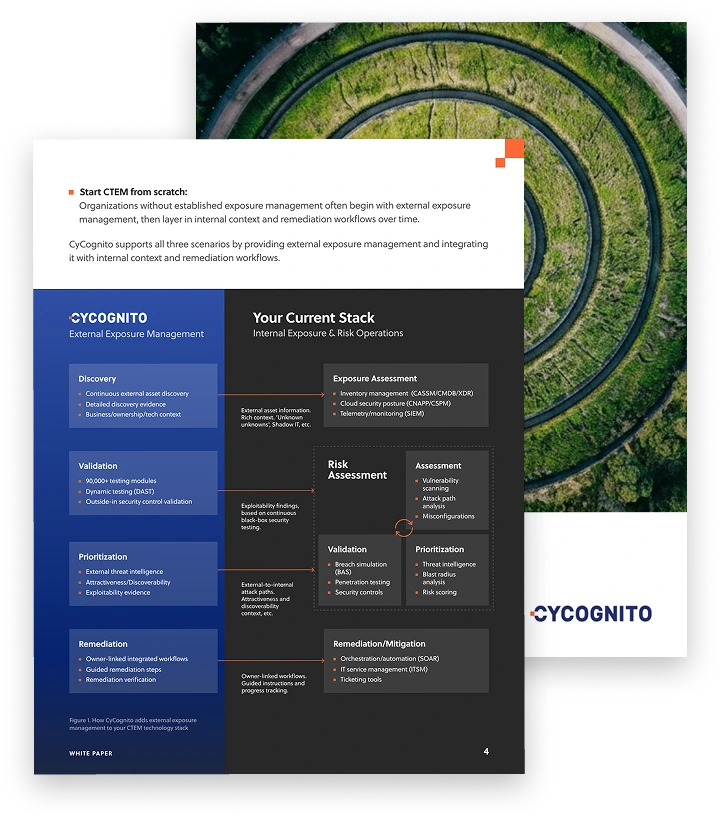


How Attack Surface Discovery Works

1. Asset Identification

Attack surface discovery starts with identifying all internet-facing assets that belong to an organization. This includes known and unknown domains, subdomains, IP addresses, web applications, APIs, and cloud infrastructure. Discovery techniques often combine passive data sources (like certificate transparency logs, DNS records, and WHOIS data) with active scanning to find exposed systems.

Modern tools may also query cloud provider APIs and parse infrastructure-as-code repositories to find assets created outside traditional deployment pipelines. The goal is to build a complete picture of what is publicly accessible, regardless of who or what deployed it.
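As one illustration of a passive source, the sketch below pulls candidate hostnames out of certificate transparency search results. It assumes records shaped as dictionaries with a newline-separated `name_value` field, which is how some CT search services format their JSON output. The field name and the sample data are assumptions, and no network call is made here.

```python
def hostnames_from_ct_records(records, base_domain):
    """Collect unique hostnames under base_domain from CT-style records."""
    base = base_domain.lower().rstrip(".")
    found = set()
    for record in records:
        # Assumed shape: one certificate per record, names separated by newlines.
        for name in record.get("name_value", "").splitlines():
            name = name.strip().lower().rstrip(".")
            if name.startswith("*."):
                name = name[2:]  # a wildcard entry still reveals its parent zone
            if name == base or name.endswith("." + base):
                found.add(name)
    return sorted(found)
```

Names recovered this way are only candidates. They still need DNS resolution and active checks to confirm that something is actually exposed.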

2. Attack Surface Mapping

Once assets are identified, the next step is to map how they are exposed and interconnected. This includes identifying open ports, running services, exposed APIs, and the relationships between applications, domains, and hosting environments.

Mapping also involves fingerprinting technologies in use (e.g., web servers, CMS platforms, third-party services) and detecting potentially risky configurations like directory listings, weak authentication, or outdated software. This step helps assess which assets present the greatest risk based on their exposure level and value.
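A very simple form of technology fingerprinting reads the product tokens that servers advertise in HTTP response headers. The sketch below assumes the headers have already been collected into a dictionary. It only looks at `Server` and `X-Powered-By`, whereas real fingerprinting combines many more signals and has to cope with headers that are missing or deliberately misleading.

```python
import re

# Matches tokens such as "nginx", "Apache/2.4.41" or "Microsoft-IIS/10.0".
PRODUCT_TOKEN = re.compile(r"([A-Za-z][\w.\-]*)(?:/([\w.\-]+))?")


def fingerprint_headers(headers):
    """Extract (product, version) pairs from Server and X-Powered-By headers."""
    lowered = {key.lower(): value for key, value in headers.items()}
    findings = []
    for header in ("server", "x-powered-by"):
        value = lowered.get(header, "")
        # Drop parenthesised comments such as "(Ubuntu)" before tokenizing.
        value = re.sub(r"\([^)]*\)", " ", value)
        for product, version in PRODUCT_TOKEN.findall(value):
            findings.append((product.lower(), version or None))
    return findings
```

The resulting pairs can feed the later assessment step, where an observed version is compared against known-vulnerable releases.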

3. Vulnerability Assessment

After mapping the attack surface, the system evaluates each asset for known vulnerabilities. This may involve banner grabbing, protocol analysis, version detection, and correlation with vulnerability databases such as CVE or NVD.

Some tools go further by simulating attacker behavior to identify misconfigurations or exploitable weaknesses, such as default credentials, exposed admin panels, or unpatched services. The objective is to understand the technical weaknesses in the discovered assets before an attacker does.
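The correlation step can be pictured as a lookup of an observed product and version against advisory data. The sketch below uses a made-up product name and made-up advisory identifiers, not real CVE entries, and it assumes each advisory is described by the first fixed version. Real vulnerability data uses richer version ranges and configuration conditions.

```python
def parse_version(text):
    """Turn '2.4.41' into (2, 4, 41); non-numeric parts are ignored."""
    return tuple(int(part) for part in text.split(".") if part.isdigit())


# Hypothetical advisory data: product -> list of (advisory id, first fixed version).
ADVISORIES = {
    "exampled": [("EXAMPLE-0001", "2.4.50"), ("EXAMPLE-0002", "2.2.0")],
}


def match_advisories(product, version, advisories=ADVISORIES):
    """Return advisory ids whose fix is newer than the observed version."""
    observed = parse_version(version)
    return [
        advisory_id
        for advisory_id, fixed_in in advisories.get(product, [])
        if observed < parse_version(fixed_in)
    ]
```

A match based on version alone is only a candidate finding, since backported patches or disabled features can make an apparently old version safe.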


4. Asset Inventory Management

The discovered and assessed assets are stored in a centralized inventory that serves as the source of truth for external exposure. This inventory should include metadata such as asset ownership, business function, deployment environment, and security classification.

Asset inventory management enables tracking over time, showing when assets appear, change, or are decommissioned. It also supports accountability by linking assets to responsible teams or business units, improving response workflows and remediation.
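One way to picture such an inventory is a record per asset that carries ownership metadata plus first-seen and last-seen dates. The class and field names below are hypothetical and the storage is an in-memory dictionary. It is a sketch of the idea rather than a description of any particular product.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class AssetRecord:
    identifier: str
    asset_type: str
    owner: str = "unassigned"
    environment: str = "unknown"
    first_seen: Optional[date] = None
    last_seen: Optional[date] = None


class Inventory:
    def __init__(self):
        self._records = {}

    def observe(self, identifier, asset_type, seen_on, owner=None, environment=None):
        """Create the record on first sighting, otherwise refresh last_seen."""
        record = self._records.get(identifier)
        if record is None:
            record = AssetRecord(identifier, asset_type, first_seen=seen_on)
            self._records[identifier] = record
        record.last_seen = seen_on
        if owner is not None:
            record.owner = owner
        if environment is not None:
            record.environment = environment
        return record

    def get(self, identifier):
        return self._records.get(identifier)

    def stale(self, today, max_age_days):
        """Identifiers not observed within max_age_days: decommission candidates."""
        return sorted(
            record.identifier
            for record in self._records.values()
            if (today - record.last_seen).days > max_age_days
        )
```

Keeping both dates is what makes it possible to show when an asset appeared and to flag assets that have quietly stopped responding.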

5. Continuous Monitoring

Attack surfaces are dynamic. New assets can appear without notice, and configurations can change frequently. Continuous monitoring ensures that organizations detect these changes in near real time.

This involves regular scanning, alerting on new or modified assets, and tracking asset lifecycle events. Integration with change management systems or CI/CD pipelines can enhance visibility into why changes occurred. Continuous monitoring helps security teams respond quickly and maintain an accurate picture of risk exposure.
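At its core, change detection is a comparison between two scan snapshots. The sketch below assumes each snapshot maps an asset name to its list of open ports. The snapshot shape and the sample values are illustrative assumptions.

```python
def diff_snapshots(previous, current):
    """Compare two {asset: [open ports]} snapshots and report what changed."""
    added = sorted(set(current) - set(previous))
    removed = sorted(set(previous) - set(current))
    changed = {}
    for asset in sorted(set(previous) & set(current)):
        before, after = set(previous[asset]), set(current[asset])
        if before != after:
            changed[asset] = {
                "opened": sorted(after - before),
                "closed": sorted(before - after),
            }
    return {"added": added, "removed": removed, "changed": changed}
```

Each entry in `added` or `changed` is a natural trigger for an alert, while `removed` entries feed asset lifecycle tracking.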

Tips from the Expert

Dima Potekhin, CTO and Co-Founder of CyCognito, is an expert in mass-scale data analysis and security. He is an autodidact who has been coding since the age of nine and holds four patents that include processes for large content delivery networks (CDNs) and internet-scale infrastructure.

In my experience, here are tips that can help you better operationalize and optimize your external attack surface management efforts:

- Use recursive discovery to expose nested and indirect assets: Many overlooked assets are only a few hops away from known infrastructure, linked via redirects, embedded resources, or shared third-party services. Configure your tools to recursively follow asset relationships (e.g., JS includes, iframe targets, CNAMEs).

- Use certificate subject alternative names (SANs) to reveal orphaned services: Certificates often list multiple SAN entries, which can include forgotten or legacy domains. Parsing and pivoting off SANs in CT logs frequently reveals dormant or abandoned assets not otherwise visible in DNS or cloud metadata.

- Exploit differential DNS observation across global resolvers: Malicious actors often stage assets geographically. Use geographically distributed DNS resolvers to detect regionally exposed or geo-fenced subdomains that may not appear in standard lookups but are still live in other regions.

- Fingerprint cloud misattribution through indirect branding: Analyze TLS certificate issuers, favicon hashes, CDN headers, and site metadata to catch assets hosted in third-party environments that still trace back to your organization (e.g., marketing microsites or acquisitions) but aren’t linked via primary domains.

- Integrate IaC (Infrastructure as Code) linting into discovery workflows: IaC templates can reveal intended deployments that may not have surfaced yet. Parsing Terraform or CloudFormation repositories provides early insight into assets soon to appear in your external footprint.
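The recursive discovery tip can be sketched as a breadth-first walk over asset relationships with a hop limit. Here `related` is a hypothetical, precomputed mapping from each asset to the assets it references (for example via CNAMEs, redirects, or script includes). In practice those links would come from resolvers and crawlers rather than a static dictionary.

```python
from collections import deque


def recursive_discovery(seeds, related, max_hops=2):
    """Breadth-first walk; returns {asset: hops from the nearest seed}."""
    hops = {seed: 0 for seed in seeds}
    queue = deque(seeds)
    while queue:
        asset = queue.popleft()
        if hops[asset] >= max_hops:
            continue  # do not expand beyond the hop limit
        for neighbor in related.get(asset, ()):
            if neighbor not in hops:
                hops[neighbor] = hops[asset] + 1
                queue.append(neighbor)
    return hops
```

The hop limit matters because shared third-party services link to a very large number of unrelated organizations, so an unbounded walk quickly leaves the organization's footprint.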

Types of Attack Surface Discovery Applications

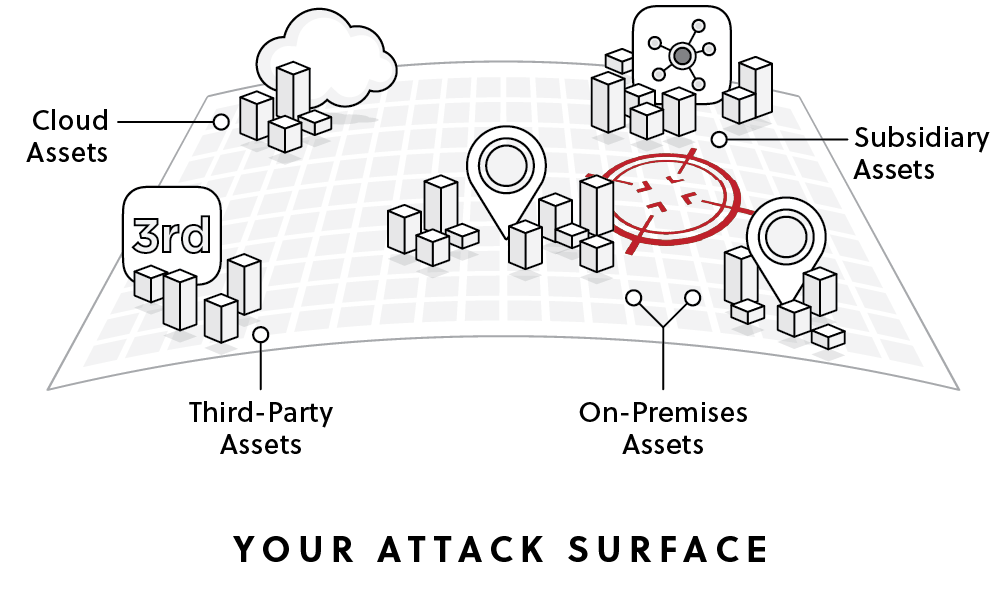

External/Cloud Asset Discovery

External and cloud asset discovery focuses on identifying all assets that are reachable from the public internet. The goal is to map what attackers can see and interact with outside the organization’s internal network. These assets often change quickly due to cloud deployments, DevOps workflows, and third-party integrations.

Key asset categories typically included in external discovery:

- Domains and DNS: Discovery tools enumerate domains, DNS zones, records, and subdomains associated with the organization. This often includes identifying forgotten or legacy subdomains that still resolve publicly.

- IP space and network edge: This includes public IP ranges owned or used by the organization, as well as infrastructure hosted in cloud providers or external hosting platforms. Discovery also identifies exposed network services and edge infrastructure.

- Web applications and APIs: These are internet-facing applications, API endpoints, hosts, paths, and parameters. Mapping these components helps security teams understand how applications are exposed and where input points exist.

- Cloud assets: Discovery includes cloud accounts, storage buckets, load balancers, serverless endpoints, and other publicly accessible cloud resources across providers such as AWS, Azure, or Google Cloud.

- Third-party and vendor-connected assets: Many organizations rely on SaaS platforms, partners, and external vendors that integrate with their infrastructure. Discovery helps identify these connected assets and determine whether they expose additional entry points.

By continuously mapping these categories, external/cloud asset discovery provides a clear view of the organization’s publicly exposed infrastructure and highlights assets that require security review.

Internal Asset Discovery

Internal asset discovery focuses on identifying systems and services that exist within an organization’s private network. These assets are not directly accessible from the public internet but may still present risk if an attacker gains internal access through phishing, compromised credentials, or a breached endpoint.

The goal is to build a complete map of internal infrastructure so security teams understand what systems exist, how they are connected, and where sensitive data may reside. Although internal assets are not part of the external attack surface, organizations need visibility into both internal and external assets.

Key asset categories typically included in internal discovery:

- Internal networks and IP ranges: Discovery tools scan private IP ranges to identify active hosts, network devices, and internal services running across the environment.

- Servers and endpoints: This includes physical servers, virtual machines, employee workstations, and other devices connected to the internal network.

- Internal applications and services: Many organizations run internal web apps, APIs, dashboards, and management tools that are only accessible within the corporate network.

- Identity and directory systems: Systems such as Active Directory, identity providers, and authentication services are critical internal assets that control access to infrastructure and applications.

- Databases and storage systems: Internal discovery also identifies databases, file shares, and storage systems that may contain sensitive business or customer data.

Understanding internal assets helps security teams detect misconfigurations, enforce access controls, and reduce lateral movement opportunities. While external discovery shows what attackers can reach from the internet, internal discovery reveals what they could access after gaining a foothold inside the network.

Shadow IT Detection

Shadow IT refers to systems, applications, or services used within an organization without formal approval or visibility by the security team. Attack surface management tools help detect these assets by correlating internet-facing infrastructure with known inventories and approved deployment sources.

Examples include unregistered domains, rogue cloud instances, personal SaaS tools, or development environments left exposed. Shadow IT detection reduces blind spots and ensures security policies apply across the full environment.

Supply Chain Assets

Supply chain asset discovery focuses on identifying external systems and services operated by partners, vendors, and service providers that interact with an organization’s infrastructure. These assets are not owned or directly controlled by the organization but can still introduce security risk if compromised.

Modern organizations depend on many third-party platforms, such as SaaS tools, managed services, payment processors, and analytics providers. These services often integrate through APIs, shared credentials, webhooks, or embedded scripts. Each integration creates a potential pathway that attackers could exploit.

Key asset categories in supply chain discovery include:

- Third-party SaaS platforms: Applications used by the organization that store or process company data, such as CRM systems, collaboration tools, or analytics services.

- Vendor-hosted applications: Systems hosted by external vendors that are connected to the organization’s infrastructure, such as customer portals, payment platforms, or support systems.

- API integrations: External APIs used for data exchange between systems. These integrations may expose endpoints, authentication tokens, or webhook interfaces.

- Partner infrastructure: Systems operated by business partners that share data or provide services, such as logistics platforms, identity providers, or payment gateways.

- Embedded third-party components: Scripts, libraries, or services embedded in websites and applications, including content delivery networks, advertising platforms, or tracking tools.

Discovering and mapping these assets helps security teams understand where external dependencies exist and how they connect to internal systems. This visibility makes it easier to assess third-party risk and detect potential supply chain attack paths.

Key Capabilities of Effective Attack Surface Discovery Solutions

Continuous Cadence

Effective attack surface management tools scan an organization’s attack surface continuously. External infrastructure changes frequently as organizations deploy new cloud resources, update DNS records, spin up development environments, or integrate new services. A static or infrequent scan quickly becomes outdated and can miss newly exposed assets.

Continuous discovery works by running recurring scans, monitoring passive intelligence sources, and tracking asset lifecycle events. Passive sources may include certificate transparency logs, passive DNS data, internet scan datasets, and domain registration records. These signals can reveal newly issued certificates, newly resolved subdomains, or infrastructure changes before they are formally documented.

A continuous cadence also enables historical tracking. Security teams can see when an asset first appeared, how its configuration changed, and whether previously remediated exposures have resurfaced. This timeline is useful during incident investigations and helps organizations measure how quickly new exposures are detected and resolved.

Attacker’s Perspective

Attack surface discovery should replicate the reconnaissance techniques used by real attackers. Instead of relying solely on internal inventories or privileged data sources, discovery tools analyze publicly available information to determine what an external adversary can observe and interact with. This outside-in view is essentially reconnaissance based on open-source intelligence (OSINT).

Common attacker-style techniques include DNS enumeration, certificate transparency analysis, reverse IP lookups, web crawling, and scanning for open services. Tools may also collect data from internet-wide scanning projects or passive telemetry sources that record publicly exposed services.

Viewing the environment from an attacker’s perspective helps highlight assets that might otherwise be overlooked. For example, an internal asset inventory might list production systems but omit abandoned development environments or legacy domains. If these systems remain publicly accessible, they can become attractive targets because they often receive less monitoring and patching.

Broad Coverage

Modern organizations operate across many platforms and infrastructure models, which significantly expands the potential attack surface. Effective discovery solutions must cover a wide range of asset types, including traditional data center infrastructure, public cloud environments, SaaS integrations, and externally hosted services.

Coverage typically includes domains, subdomains, public IP addresses, load balancers, cloud storage buckets, APIs, containers, serverless endpoints, and web applications. Discovery tools must also handle multi-cloud environments across providers such as AWS, Azure, and Google Cloud, where assets can be created dynamically through automation pipelines.

Another challenge is organizational sprawl. Large companies often operate through subsidiaries, regional divisions, or acquired brands, each with its own domains and infrastructure. Discovery tools should be able to identify assets associated with these entities by analyzing naming patterns, certificates, and hosting relationships.

Data Enrichment and Context

Raw discovery results, such as a list of IP addresses or domains, provide limited value without additional context. Effective discovery solutions enrich discovered assets with metadata that helps security teams understand what the asset is, how it is used, and who owns it.

Common enrichment data includes geolocation, hosting provider, ASN ownership, associated domains, technology stack, and service fingerprints. For web applications, this may involve identifying frameworks, content management systems, analytics platforms, or third-party scripts embedded in the application.

Contextual data can also include organizational metadata, such as asset owner, business unit, environment type (production, staging, development), and criticality level. Linking assets to responsible teams allows alerts and remediation tasks to be routed to the correct stakeholders.

Risk Validation

Attack surface discovery often identifies thousands of assets, but only a subset represent meaningful security risk. Risk validation helps separate high-priority exposures from low-impact findings.

This process involves evaluating each asset for vulnerabilities, misconfigurations, and exploitable conditions. Techniques may include service fingerprinting, version detection, banner analysis, and correlation with vulnerability databases such as CVE and NVD. For example, if a discovered server exposes an outdated version of a web server with a known exploit, the tool can flag it as a higher-priority issue.

Some solutions perform additional validation by confirming whether a vulnerability is actually exploitable. For instance, they may verify that an exposed administrative interface, storage bucket, or development dashboard is actually reachable without authentication.

Integration and Workflow

Attack surface discovery becomes far more effective when integrated into the organization’s broader security ecosystem. Instead of functioning as a standalone tool, discovery platforms should feed their findings into systems already used by security and operations teams.

Common integrations include security information and event management (SIEM) platforms, vulnerability management systems, ticketing tools such as Jira or ServiceNow, and security orchestration and automation platforms (SOAR). These integrations allow discoveries to trigger automated workflows.

For example, when a new internet-facing asset is discovered, the system might automatically create an inventory entry, assign ownership based on DNS or cloud metadata, and open a ticket for security review. High-risk exposures can trigger alerts in monitoring systems or incident response platforms.
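The workflow in that example might look roughly like the sketch below. The inventory and ticket queue are plain Python containers standing in for real systems, and the tag name `owner`, the status value, and the ticket fields are all hypothetical. No actual ticketing or SIEM API is being called.

```python
def handle_new_asset(hostname, cloud_tags, inventory, tickets):
    """Record a newly discovered asset and queue a review ticket for it."""
    # Assumption: ownership can be read from an "owner" tag in cloud metadata.
    owner = cloud_tags.get("owner", "unassigned")
    inventory[hostname] = {"owner": owner, "status": "pending-review"}
    ticket = {
        "summary": f"Security review: new internet-facing asset {hostname}",
        "assignee": owner,
        # Assets nobody claims are treated as more urgent in this sketch.
        "priority": "high" if owner == "unassigned" else "normal",
    }
    tickets.append(ticket)
    return ticket
```

In a real integration, the final step would call the ticketing platform's own API instead of appending to a list.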

Remediation Insights

Discovery tools should not only identify exposures but also provide actionable guidance for fixing them. Security teams often need clear information about what the issue is, why it matters, and how to remediate it.

Effective remediation insights include detailed descriptions of the exposure, affected assets, potential impact, and recommended mitigation steps. For example, if a storage bucket is publicly accessible, the tool might recommend adjusting access policies, enabling authentication, or restricting network access.

Prioritization mechanisms help teams focus on the most critical issues first. These may combine vulnerability severity scores, exploit availability, exposure level, and business context. Some platforms also provide remediation playbooks or links to documentation for common fixes.
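A prioritization mechanism of this kind can be approximated by a score that starts from technical severity and is scaled by exploit availability, exposure, and business context. The multipliers and the 1 to 3 criticality scale below are arbitrary assumptions chosen for illustration, not an established scoring standard.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    title: str
    severity: float          # e.g. a CVSS-style base score from 0 to 10
    exploit_available: bool
    internet_exposed: bool
    asset_criticality: int   # 1 (low) to 3 (high), assigned by the business


def priority_score(finding):
    """Scale severity by exploitability, exposure, and business criticality."""
    score = finding.severity
    if finding.exploit_available:
        score *= 1.5  # illustrative weight
    if finding.internet_exposed:
        score *= 1.5  # illustrative weight
    return round(score * finding.asset_criticality, 2)


def rank(findings):
    """Order findings from highest to lowest priority score."""
    return sorted(findings, key=priority_score, reverse=True)
```

With these weights, a medium-severity issue on an exposed, business-critical asset can outrank a higher-severity issue on an isolated, low-value one, which is the intended effect of adding context.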

Attack Path Mapping

Attack path mapping analyzes how multiple assets and vulnerabilities could be chained together during a real attack. Instead of evaluating assets in isolation, this capability examines relationships between systems to identify potential routes an attacker could take to reach sensitive resources.

For example, a publicly exposed web application might connect to an internal API, which in turn communicates with a database containing sensitive data. If the web application is vulnerable and the API lacks proper authentication controls, an attacker could exploit the web application to access the internal service.

Attack path mapping helps security teams understand these relationships by modeling dependencies between domains, services, identity systems, and network infrastructure. It highlights scenarios where a seemingly low-risk exposure could lead to a more serious compromise when combined with other weaknesses.

This analysis supports more strategic remediation. Instead of fixing issues individually, teams can prioritize vulnerabilities that enable high-impact attack paths and significantly reduce overall risk.
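The chained example above can be modeled as a small directed graph in which an edge means "an attacker who controls this node can reach that one". The sketch below enumerates simple paths from an entry point to a sensitive target. The node names are hypothetical, and real attack path analysis would attach conditions such as required vulnerabilities or credentials to each edge.

```python
def find_attack_paths(edges, start, target):
    """Return every simple (cycle-free) path from start to target."""
    paths = []

    def walk(node, path):
        if node == target:
            paths.append(path)
            return
        for nxt in edges.get(node, ()):
            if nxt not in path:  # avoid revisiting nodes
                walk(nxt, path + [nxt])

    walk(start, [start])
    return paths


# Hypothetical environment mirroring the web app -> API -> database example.
EXAMPLE_EDGES = {
    "internet": ["web-app", "marketing-site"],
    "web-app": ["internal-api"],
    "internal-api": ["customer-db"],
    "marketing-site": [],
}
```

Calling `find_attack_paths(EXAMPLE_EDGES, "internet", "customer-db")` yields the single route through the web application and internal API. Breaking any edge on that route, for example by adding authentication to the API, removes the path.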

Best Practices for Attack Surface Discovery

Establish a Continuous Discovery Process

Attack surfaces change constantly as new services are deployed, domains are registered, and infrastructure is modified. A one-time discovery scan quickly becomes outdated. Organizations should implement a continuous discovery process that runs at regular intervals and monitors passive data sources in near real time.

Continuous discovery combines automated scanning with external intelligence sources such as DNS records, certificate transparency logs, and internet scanning datasets. This allows security teams to detect newly exposed assets shortly after they appear. Early visibility reduces the time attackers have to discover and exploit them.

Integrating discovery into CI/CD pipelines and cloud provisioning workflows further improves coverage. When new infrastructure is deployed, it can automatically be added to the discovery process and evaluated for exposure.

Contextual Classification of Assets

Discovered assets should be classified with meaningful context so security teams can understand their purpose and risk level. Classification typically includes asset type, environment (production, staging, development), associated business unit, and data sensitivity.

Context helps distinguish critical systems from low-risk assets. For example, a production authentication service carries significantly more risk than a temporary development environment. Without this context, security teams may treat all findings equally and waste time investigating low-impact exposures.

Effective classification also assigns ownership. Each asset should be linked to a responsible team or system owner. This ensures alerts and remediation tasks are directed to the people who can actually resolve the issue.

Correlate External and Internal Asset Inventories

External discovery provides visibility into internet-facing assets, but it becomes more valuable when correlated with internal asset inventories. Internal systems such as CMDBs, cloud asset databases, and endpoint management tools contain additional context about infrastructure ownership and configuration.

By correlating these datasets, organizations can identify discrepancies. For example, an externally discovered domain may not appear in the official asset inventory, indicating shadow IT or an unmanaged system. Conversely, an internally tracked asset that is unexpectedly exposed externally may represent a misconfiguration.

This correlation helps maintain a single, consistent view of infrastructure. It also improves investigation and remediation workflows because discovered assets can be mapped directly to known systems and responsible teams.
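The two discrepancy types described above reduce to set operations once both inventories are expressed as lists of identifiers. The sketch below assumes hostnames are already normalized the same way in both sources. The parameter names are hypothetical.

```python
def correlate(external_hosts, inventory_hosts, internal_only_hosts):
    """Flag mismatches between external discovery and the official inventory."""
    external = set(external_hosts)
    return {
        # Seen from the internet but absent from the inventory: possible shadow IT.
        "unmanaged": sorted(external - set(inventory_hosts)),
        # Tracked as internal-only yet reachable externally: possible misconfiguration.
        "unexpectedly_exposed": sorted(external & set(internal_only_hosts)),
    }
```

In practice, most of the work lies in normalizing identifiers across sources so that the same asset is recognized as the same asset.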

Validate Exploitability via Active Testing

Not every discovered exposure represents a practical security risk. To reduce false positives, organizations should validate whether identified weaknesses are actually exploitable. Active testing techniques help confirm real attack paths and prioritize meaningful findings.

Validation methods may include service fingerprinting, authentication testing, configuration analysis, and controlled vulnerability scanning. For example, a system might appear vulnerable based on its software version, but active testing can confirm whether the vulnerable component is actually accessible.

This step should be performed carefully to avoid disrupting production services. When implemented correctly, exploitability validation helps security teams focus on exposures that attackers could realistically use.

Prioritize for Remediation

Attack surface discovery often generates a large volume of findings. Effective remediation requires prioritization based on risk rather than addressing issues in arbitrary order. Risk prioritization combines several factors, including vulnerability severity, exploit availability, asset criticality, and exposure level.

For example, a critical vulnerability on a publicly exposed authentication system should be addressed before a medium-severity issue on an internal staging server. Combining technical severity with business context helps ensure remediation efforts reduce the most meaningful risk.

Security teams should also track remediation timelines and measure how quickly exposures are resolved. Establishing service-level objectives for fixing high-risk issues helps maintain accountability and improves overall security posture.
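Tracking remediation against service-level objectives can start with something as simple as the sketch below, which computes mean days to remediate and lists findings that have exceeded their target. The SLO values, the finding fields, and the identifiers are hypothetical examples, not recommended targets.

```python
from datetime import date

# Hypothetical targets: maximum days allowed to fix a finding, by severity.
SLO_DAYS = {"critical": 7, "high": 30}


def remediation_report(findings, today, slo_days=SLO_DAYS):
    """Summarize remediation speed and list findings that breached their SLO."""
    resolved_ages = []
    breaches = []
    for finding in findings:
        # Open findings are aged up to today; resolved ones up to their fix date.
        end = finding["resolved"] or today
        age = (end - finding["opened"]).days
        if finding["resolved"] is not None:
            resolved_ages.append(age)
        limit = slo_days.get(finding["severity"])
        if limit is not None and age > limit:
            breaches.append(finding["id"])
    mean = sum(resolved_ages) / len(resolved_ages) if resolved_ages else None
    return {"mean_days_to_remediate": mean, "slo_breaches": breaches}
```

Reporting open findings that are already past their target, rather than only measuring resolved ones, keeps slow fixes visible while they are still actionable.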

Attack Surface Discovery with CyCognito

Most discovery tools start from what you already know — a list of domains, IP ranges, or cloud accounts you provide. CyCognito starts from nothing more than your organization’s name, using seedless, outside-in discovery to uncover the full external footprint the way an attacker would: without prior knowledge of what exists or where it lives.

- Discovers assets across domains, cloud infrastructure, APIs, subsidiaries, shadow IT, and third-party exposure — without seeds, agents, or manual configuration

- Contextualizes every asset to its business owner, environment, and function so findings route to the right people immediately

- Continuously validates exploitability on every discovered asset through always-on active testing — reducing what appears critical from 25% of findings to 0.1% that are confirmed exploitable

- Prioritizes using attacker reachability, business context, and exploit intelligence — not severity scores alone

- Tracks findings from discovery through verified fix, closing the loop between what is found and what is actually resolved

Organizations using CyCognito typically find their attack surface is up to 20x larger than previously inventoried, most of it unknown because it was never in the asset list to begin with.