When security teams had control

The 1990s were a good time for security teams: our networks had only a couple of points of ingress and egress.

As with defending a castle, defending an IT environment that has only one or two points of entry and exit lets you spend limited resources on thick layers of hardened defenses. A castle could be defended with a moat, a drawbridge, murder holes, reinforced doors, stone walls, entrenched archers, and armored knights, to name only a few.

In the days of singular, monolithic data centers with only one or two points of presence on the internet, we could layer network firewalls, web application firewalls and intrusion detection/prevention systems, and obfuscate internal hosts behind network address translation (NAT). If all that failed, we could still fall back on a myriad of host-based defenses such as endpoint detection and response, host-based intrusion detection and antivirus.

The days of the castle builders

The 1990s and early 2000s were the days of the castle builders, and security spending reflected the layering strategy to a tee. Then came Amazon Web Services Elastic Compute Cloud in 2006, Azure in 2010, and Google Cloud Platform in 2011, with dreams of IaaS. Salesforce, Atlassian and GitHub arrived to host our data as SaaS. Partners, subsidiaries and economies of scale demanded direct access to our networks to exchange data.

The castle that hosted our data, infrastructure and operations has crumbled, brought down by convenience and necessity. Our monolith melted from a hardened, tightly controlled handful of internet-exposed assets and ingress/egress points into a broad field of new, complex interrelationships among our offices, legacy data centers, IaaS, SaaS, partners and subsidiaries, without the impenetrable walls of the past.

And then the Internet changed everything

Want to compromise a company’s data in the post-castle world? Why be traditional and attack the data center directly when you can attack a partner and move laterally into the network (Target)? Why launch nuanced SQL injection attacks against a hardened perimeter when you can find an unmanaged, abandoned or misconfigured asset to serve as your beachhead (Novaestrat)? Given the ease with which we can spin up workloads and mangle access control lists, can we reasonably expect our attack surface to resemble anything in our prior experience? And should we expect our IT staff to be flawless in maintaining and monitoring that surface?

As a former federal Security Operations Center dweller who loved building robust castle-style defenses, I have to give way to the new paradigm and admit that the old ways of thinking aren’t compatible with today’s varied IT ecosystem. The ways into our networks and to our data are now legion, and they differ in both scope and means of entry.

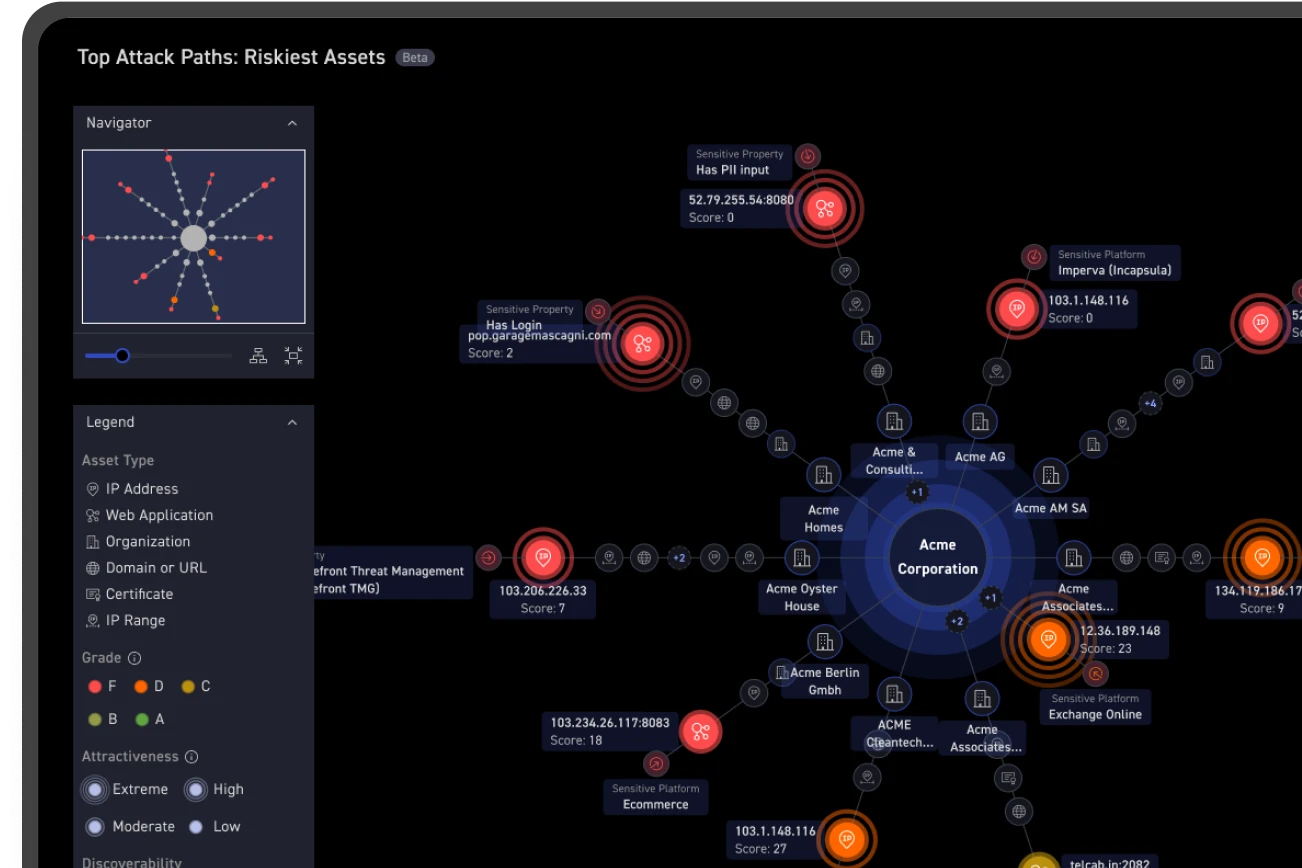

The only effective way of managing risk

Even when we don’t control the infrastructure (SaaS), we’re responsible for the data; even when we do control it (IaaS), a single keystroke in a cloud access control list can expose our data to the world. The only effective way to manage the risk posed by all of these environments and interconnects is to continuously map what’s exposed to adversaries (assets), identify weaknesses (issues) and remediate them. The CyCognito platform was designed for this new paradigm.
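
To make the map, identify and remediate loop a little more concrete, here is a minimal Python sketch of a single pass. It is an illustration only and does not describe how any particular product works: the `Asset` fields, the `RISKY_PORTS` table, the function names and the sample inventory are all hypothetical, and a real program would pull its inventory from live discovery rather than a hard-coded list.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Asset:
    """Hypothetical record for one asset in the environment."""
    name: str
    environment: str            # e.g. "datacenter", "iaas", "saas", "partner"
    internet_exposed: bool      # reachable by an outside adversary?
    open_ports: List[int] = field(default_factory=list)
    managed: bool = True        # does someone own and patch it?


# Assumed, deliberately tiny list of services we never want exposed.
RISKY_PORTS = {23: "telnet", 3389: "rdp", 9200: "elasticsearch"}


def map_exposed(inventory: List[Asset]) -> List[Asset]:
    """Step 1: map what is exposed to adversaries (assets)."""
    return [asset for asset in inventory if asset.internet_exposed]


def identify_issues(asset: Asset) -> List[str]:
    """Step 2: identify weaknesses (issues) on one exposed asset."""
    issues = []
    if not asset.managed:
        issues.append("unmanaged asset")
    for port in asset.open_ports:
        if port in RISKY_PORTS:
            issues.append(f"{RISKY_PORTS[port]} open to the internet")
    return issues


def assessment_cycle(inventory: List[Asset]) -> Dict[str, List[str]]:
    """One pass of the loop; step 3 is remediating what this returns.

    Running this on a schedule, against a freshly discovered inventory,
    is what makes the process continuous.
    """
    findings = {}
    for asset in map_exposed(inventory):
        issues = identify_issues(asset)
        if issues:
            findings[asset.name] = issues
    return findings


# Sample inventory (made up for illustration).
inventory = [
    Asset("corp-web", "datacenter", True, [443]),
    Asset("forgotten-search", "iaas", True, [9200], managed=False),
    Asset("hr-db", "datacenter", False, [3389]),
]

findings = assessment_cycle(inventory)
for name, issues in findings.items():
    print(name, "->", "; ".join(issues))
```

In this made-up inventory, only the abandoned IaaS workload is flagged. The hardened web server has nothing risky exposed, and the internal database never appears because it is not reachable from outside. That mirrors the point above: the beachhead is rarely the asset the castle builders were guarding.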