Even for organizations with a strong track record of securing traditional applications and infrastructure, AI security can prove deeply challenging.

The reason why is that AI poses a number of unique security challenges and risks – such as the potential for prompt injection, training data poisoning and insecure AI agents, to name a few – that don’t apply to other types of workloads.

Hence the importance of extending traditional cybersecurity strategies to ensure AI security. This explains how organizations should adapt to meet AI security challenges by discussing what AI security means, identifying common AI security risks and describing actionable best practices for protecting AI workloads. Developing explicit security strategies for AI is crucial, including best practices for data governance, managing risks, and integrating AI with existing cybersecurity tools.

Organizations are encouraged to explore AI security solutions while maintaining robust security controls and visibility.

What is AI security?

AI security is the practice of securing AI systems, workloads and infrastructure, such as generative AI applications, and AI and ML applications.

The fundamentals of AI security are the same as those that underpin cybersecurity in general. For example, core AI security practices – like hardening systems against attack, monitoring for AI risks and responding to threats – apply not just to AI security, but also to standard application security. Similarly, just as any application could be subject to software supply chain vulnerabilities, AI systems may be impacted by risks stemming from third-party code or modules.

However, as noted above, AI presents some unique challenges, which require a special approach to AI threat management. Organizations are increasingly adopting security frameworks specifically designed for AI, which provide structured guidelines to address potential risks.

This is why it’s important to treat AI security as an ongoing process, integrating AI into existing security frameworks and processes to enhance the overall security posture.

Why does AI security matter?

AI security is important for the typical organization because AI adoption is surging, with more than 90% of organizations having adopted AI applications or tools. Given that conventional cybersecurity technologies can’t address all AI-related risks, investing in AI security protections is critical for organizations that are implementing AI solutions.

Common AI security risks

Part of the challenge of AI security is that there are many types of risks that can impact AI workloads and infrastructure. Here’s a look at the most common.

Prompt injection

Prompt injection occurs when attackers input malicious prompts into an AI model with the goal of circumventing security controls. The prompts are designed to “trick” the model into doing things it shouldn’t — such as revealing sensitive data.

Learn more in our detailed guide to Prompt Injection.

Model poisoning

Model poisoning attacks manipulate an AI model’s training data. Using this approach, attackers can influence model output. A real-world example of model poisoning was reported in 2025, when it was found that pro-Russia activists had deployed content designed to influence how AI chatbots discuss the Russia-Ukraine war.

Learn more in our detailed guide to LLM Security.

RAG poisoning

Attackers can also poison data that AI models use during the process known as retrieval-augmented generation (RAG), which allows them to access supplemental information (such as a company’s internal databases) that was not included in their original training data. This type of AI attack is known as RAG poisoning.

Extraction attacks

The goal of extraction attacks is to create an unauthorized clone of an AI model. To carry them out, attackers input a large number of requests to an AI model, analyze the outputs, and create a new model that simulates the same responses.

While extraction attacks don’t directly impact the behavior of an organization’s AI systems, they can lead to intellectual property breaches because they allow attackers, in effect, to create copies of proprietary models without permission.

Shadow AI

Shadow AI is the use of unauthorized AI systems or tools. It can create risks for businesses if employees feed sensitive data into third-party AI solutions without security or data privacy guardrails in place.

Learn more in our detailed guide to Shadow AI.

Insecure agents

AI agents – special software programs capable of carrying out actions autonomously based on guidance from AI models – can be insecure if the code running inside them contains vulnerabilities. Since many agents rely on third-party modules, libraries and other resources, software supply chain vulnerabilities can may make agents vulnerable to attack.

Learn more in our detailed guide to AI Agent Security.

MCP servers

MCP (Model Context Protocol) servers act as bridges between AI models and external tools, data sources, and services. Because they manage privileged operations, such as executing commands, retrieving sensitive data, and connecting to third-party APIs, they introduce serious risk if not properly secured.

Common threats include prompt injection (see above) through tool inputs, supply chain attacks on MCP server dependencies, unauthorized command execution, and tool poisoning, where attackers tamper with tool metadata to manipulate model behavior.

Learn more in our detailed guide to MCP Security.

Insecure agent-to-agent and agent-to-model communication

The communication channels that AI agents use to exchange data with each other, as well as with AI models, can become vectors for data leakage. This occurs if attackers are able to intercept unencrypted data flowing between agents and models. There is even a potential for threat actors to manipulate AI agent behavior by modifying requests and responses flowing over these channels.

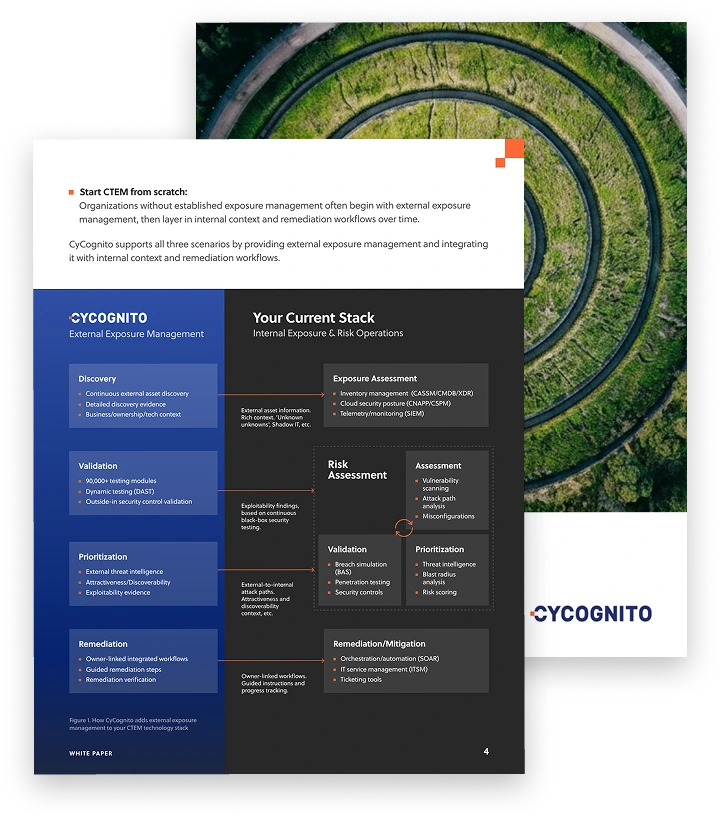

Operationalizing CTEM Through External Exposure Management

CTEM breaks when it turns into vulnerability chasing. Too many issues, weak proof, and constant escalation…

This whitepaper offers a practical starting point for operationalizing CTEM, covering what to measure, where to start, and what “good” looks like across the core steps.

AI security tools and technologies

When modern enterprise AI solutions first appeared on the scene in the early 2020s, few security tools were available to manage threats unique to AI systems.

This has begun to change, however, as specialized AI security solutions become increasingly available. They protect AI systems, AI models, and sensitive data from a wide range of security risks unique to AI environments by monitoring model input and output, as well as tracking the behavior or AI agents.

Leveraging the power of machine learning and artificial intelligence, modern AI security tools offer real-time threat detection and automated response capabilities, helping organizations stay ahead of evolving threats.

Specialized AI security tools, such as advanced threat detection platforms and security information and event management (SIEM) systems, play a critical role in identifying and mitigating AI security risks before they can lead to data breaches or compromise sensitive data. These tools continuously monitor AI systems for suspicious activity, unauthorized access, and potential vulnerabilities, ensuring that both AI models and the data they process remain secure.

OWASP AI security guidance

AI security standards and guidance have also matured in recent years. The most prominent example is the AI security recommendations from OWASP, a nonprofit dedicated to software security. OWASP has published “top 10” lists of security threats that impact large language models, as well as agentic AI applications.

Adhering to OWASP’s guidance won’t guarantee that your business is protected against AI security risks, since they are generic recommendations that don’t take into account the unique requirements of individual organizations. Nonetheless, they’re an excellent starting point for devising an AI security strategy.

Tips from the Expert

Rob Gurzeev, CEO and Co-Founder of CyCognito, has led the development of offensive security solutions for both the private sector and intelligence agencies.

The following AI security tips draw on my real-world experience helping organizations protect AI workloads and infrastructure:

- Continuously discover and inventory exposed AI services: AI model APIs, inference endpoints, MCP servers, and agent orchestration layers are internet-facing assets that carry real exploitability risk. If they are not part of your exposure management strategy, they are part of your blind spot.

- Choose AI models and vendors carefully: When selecting AI solutions, evaluate their security architectures. Consider as well whether AI vendors off any guarantees related to data privacy and security.

- Know and secure your data: AI systems are only as secure as the data organizations feed into them. Strong data security and governance standards are essential for driving AI security.

- Leverage AI to help detect AI risks: AI models can help automate tasks like validating prompt input and output to assess whether it contains risky information. This approach makes it possible to scale AI security using fully automated controls.

- Continuously evolve your AI security tool set: As AI technology changes rapidly, the security solutions that sufficed even just a few months ago may not be good enough today. It’s essential to keep security tools up-to-date in the face of emerging AI security threats.

- Update SOC processes: Securing AI requires not just the right tools, but also effective processes. Hence the need to evolve Security Operations Center (SOC) workflows to incorporate AI risk detection and mitigation.

AI Security vs. Application Security and Cloud Security

Securing AI systems doesn’t require a total overhaul of cybersecurity tools and processes. Instead, businesses can start with the foundations they already have in place to manage application and cloud security risks, then build up from there.

Traditional AppSec and cloud security solutions can help manage some of the risks that impact AI. For example, software supply chain scanners can identify vulnerabilities in AI agent code, and SIEM tools can assist in detecting anomalous activity within AI systems.

That said, other AI security challenges exist that conventional security practices don’t address. For instance, AppSec and cloud security tools won’t detect prompt injection attacks because they are not designed to monitor prompt content. And, while software supply chain scanners can detect insecure third-party code, most are not capable of validating model training data that is sourced externally (another type of resource that may exist within an organization’s AI supply chain).

Hence the importance of complementing conventional security tools and processes with novel solutions tailored for AI systems.

AI Risk Management Framework

Implementing a comprehensive risk management framework is essential for organizations looking to secure their AI systems and AI models against a constantly evolving threat landscape. A risk management framework provides a structured approach to identifying, assessing, and mitigating AI security risks, ensuring that sensitive data and critical assets are protected throughout the entire AI lifecycle.

The framework typically begins with a thorough risk assessment, where organizations evaluate potential security risks associated with their AI systems, including vulnerabilities in training data, model architecture, and deployment environments. Once risks are identified, targeted risk mitigation strategies are developed and implemented to address these vulnerabilities – ranging from technical controls to process improvements.

Continuous monitoring is a cornerstone of an effective risk management framework. By regularly reviewing and updating security measures, organizations can quickly detect and respond to new threats, reducing the likelihood of data breaches and ensuring the ongoing integrity of their AI models. Adopting a risk management framework not only strengthens security posture but also helps organizations comply with regulatory requirements and industry standards for AI security.

NIST (a U.S. government agency that develops standards and best practices) offers a widely used AI risk management framework. A number of cybersecurity companies have also released risk management frameworks.

AI Security Governance

Strong AI security governance is the foundation for building and maintaining secure AI systems. Governance encompasses the policies, procedures, and standards that guide the secure development, deployment, and operation of AI models across the organization. By establishing clear roles and responsibilities, organizations can ensure that every stage of the AI lifecycle is managed with security in mind.

Effective AI governance involves defining and enforcing security policies that address the unique risks of artificial intelligence, from protecting sensitive data to preventing data breaches. It also requires ongoing compliance with regulatory requirements and industry best practices. Continuous monitoring and regular evaluation of AI systems are essential for identifying vulnerabilities and responding to emerging threats, ensuring that security controls remain effective as AI technologies evolve.

By prioritizing AI security governance, organizations can protect their AI models, maintain the trust of customers and stakeholders, and stay ahead of new and emerging threats in the rapidly changing AI landscape.

AI Security and Compliance

Ensuring AI security and compliance is a critical aspect of any organization’s overall security strategy. As AI systems and AI models handle increasingly sensitive data, organizations must implement robust access controls, encrypt confidential information, and protect AI models from unauthorized access to prevent data breaches and maintain a strong security posture.

Compliance with regulatory requirements, industry standards, and internal policies is essential for building trust with customers and stakeholders. This includes making AI systems transparent, explainable, and fair, as well as regularly auditing security measures to ensure ongoing protection of sensitive data. By embedding security and compliance into every stage of AI development and deployment, organizations can mitigate the risk of data breaches, safeguard critical assets, and demonstrate their commitment to responsible artificial intelligence.

Prioritizing AI security and compliance not only helps organizations avoid costly incidents but also positions them as leaders in the responsible and secure use of AI technologies.

Best practices for securing AI models and agents

No matter which AI risk management framework your business chooses to follow or which governance and compliance standards it must meet, the following best practices help ensure that AI applications and services remain secure.

Model threats

Threat modeling is the practice of simulating attacks against AI systems. For instance, a business might feed malicious prompts into a model as a way of assessing it vulnerability to prompt injection attacks. Modeling threats offers a way to identify and mitigate risks before threat actors exploit them in the wild.

Validate and filter input

Model input validation and filtering is a way of detecting and blocking malicious requests, such as ones designed to cause a prompt injection attack. It works by intercepting and inspecting requests before they reach models.

Validate and filter output

Model output can also be monitored and validated before users view it. Output filtering provides a means of blocking outputs that include sensitive data. Output filtering tools can be configured such that they determine whether a user should be able to view data based on the user’s role or identity.

Rate-limit requests

In the context of AI, rate-limiting means restricting how many requests users or agents can submit to a model in a given time period. It helps to mitigate model extraction attacks, prompt injection attempts, and other breaches that may involve submitting large volumes of requests to a model.

Secure data in transit

Protecting data as it flows across networks that connect AI agents and models is a core AI security best practice. The data should be encrypted to prevent unauthorized access. It’s also possible to filter data to remove sensitive information that shouldn’t be present on the network.

Isolate training from production

Keeping AI training data in a secure environment that is isolated from production reduces the risk of poisoning attacks. This is because data is more challenging for threat actors to access when it exists in a dedicated environment.

Secure training data

More generally, keeping training data secure is a key step for preventing poisoning attacks. Businesses can do this by implementing access controls that restrict who can view and modify training data. The systems that store training data could also be configured to be read-only, preventing manipulation of the data.

Secure AI supply chains

Because modern AI systems — even those built in-house — often rely on third-party code, training data, and other resources, it’s critical to monitor the origins of those resources and ensure they are not tampered with.

Securing AI with CyCognito

AI services introduce a new category of external exposure that most security teams are not yet inventorying.

CyCognito is a leading external attack surface management platform that continuously discovers and validates every internet-facing asset your organization exposes, including exposed MCP servers, AI endpoints, inference APIs, and agent infrastructure, starting from nothing more than your organization’s name.

- Discovers AI services, model APIs, and agent endpoints you didn’t know were externally reachable, without seeds, agents, or a prior inventory to work from

- Identifies exposed AI deployments, including shadow IT bypassed procurement and security review, closing the visibility gap before it becomes a breach

- Continuously validates whether discovered AI-adjacent assets are actually exploitable, so your team focuses on confirmed risk rather than theoretical severity

- Prioritizes findings using attacker reachability and business context, not severity scores alone, so AI exposure is triaged alongside the rest of your external attack surface

- Routes validated findings to the right owners and tracks remediation through to verified closure

Organizations using CyCognito typically find their attack surface is up to 20x larger than previously inventoried. In an environment where AI endpoints are being spun up faster than they are catalogued, that gap is where attackers look first.

If you want to see CyCognito in action, click here to schedule a 1:1 demo.