What Is LLM Cybersecurity?

LLM security protects Large Language Model (LLM) applications from specialized threats like prompt injection, data poisoning, and model theft throughout their lifecycle. It combines traditional cybersecurity with AI-specific safeguards to prevent unauthorized data access, insecure output handling, and training data manipulation.

The complexity of LLMs, combined with their open-ended input and output capabilities, makes them prone to unique cybersecurity risks not commonly found in traditional software. These risks can affect confidentiality, integrity, and availability in ways that traditional IT controls may not anticipate.

Best practices for securing LLM applications include:

- Rate limiting: Prevent abuse and DoS attacks by restricting input volume.

- Use “sandboxing”: Execute generated code or applications in isolated environments.

- Adopt specialized tools: Utilize AI security tools for monitoring and defense.

- Input sanitization & filtering: Sanitize user prompts to strip out malicious instructions.

- Output validation: Treat LLM output as untrusted and analyze it for malicious content before execution.

Why Is Security So Important in LLM Usage? Top 4 Concerns for Organizations

1. Data Leakage

Data breaches are a significant concern for organizations using Large Language Models. When sensitive information, such as proprietary business data, personal identifiers, or confidential conversations, is used to prompt or fine-tune an LLM, there is a risk that this information could be exposed or retrieved by unauthorized parties. Attackers may exploit vulnerabilities in the LLM or its integration points to extract this data, especially if the system lacks robust access controls or logging.

In addition, LLMs that interact with external users can unintentionally echo sensitive information through their outputs, creating privacy and compliance risks. The inadvertent disclosure of sensitive data not only involves legal and financial consequences but can also erode trust with end-users and business partners, making preventive security controls essential at every stage of LLM deployment.

Learn more in our detailed guide data leak prevention

2. Model Exploitation

Model exploitation involves manipulating an LLM to generate unintended or harmful outputs, typically by abusing its generative properties or by crafting special input prompts. This can range from forcing the model to reveal information it should not have access to, producing biased or offensive content, or providing technical advice that could be used maliciously. Attackers may use novel techniques, such as adversarial prompts, to bypass filtering mechanisms or restrictions.

Such exploitation can have serious downstream effects. For instance, if an LLM is integrated into customer service, attackers could prompt it to leak sensitive logic, credentials, or policy information. In high-stakes environments like healthcare or finance, the consequences of malicious model exploitation can be severe, affecting safety, privacy, and regulatory compliance.

3. Misinformation

LLMs are capable of generating coherent, believable text, which makes them a vector for propagating misinformation. Attackers or careless users can leverage Large Language Models to create and spread large volumes of misleading content, including fake news, altered documents, or fraudulent messages. The high trust often placed in machine-generated text compounds the risk that such misinformation will mislead users or influence decisions.

Misinformation generated by LLMs can have organizational as well as societal impacts. Inaccurate answers or fabrications from an LLM integrated into critical workflows can disrupt operations or cause harm. When models are used to create content for public consumption, the risk of social engineering attacks or public misinformation rises sharply, highlighting the need for security controls and careful monitoring of model-generated outputs.

4. Ethical and Legal Risks

Deploying LLMs entails significant ethical and legal considerations. Models trained on unfiltered or proprietary data can inadvertently produce content that violates copyright, privacy, or employment laws. For example, outputs may include verbatim passages from copyrighted texts, or reveal personally identifiable information, leading to violations of data protection regulations such as GDPR or HIPAA.

From an ethical standpoint, the decisions about what LLM outputs are permitted, filtered, or reported can raise questions about transparency, bias, and accountability. Developers and organizations must evaluate how their models handle sensitive subjects, marginalized groups, and potentially harmful or illegal requests. A robust LLM cybersecurity strategy, therefore, must encompass legal compliance, ethical guidelines, and transparent operational policies.

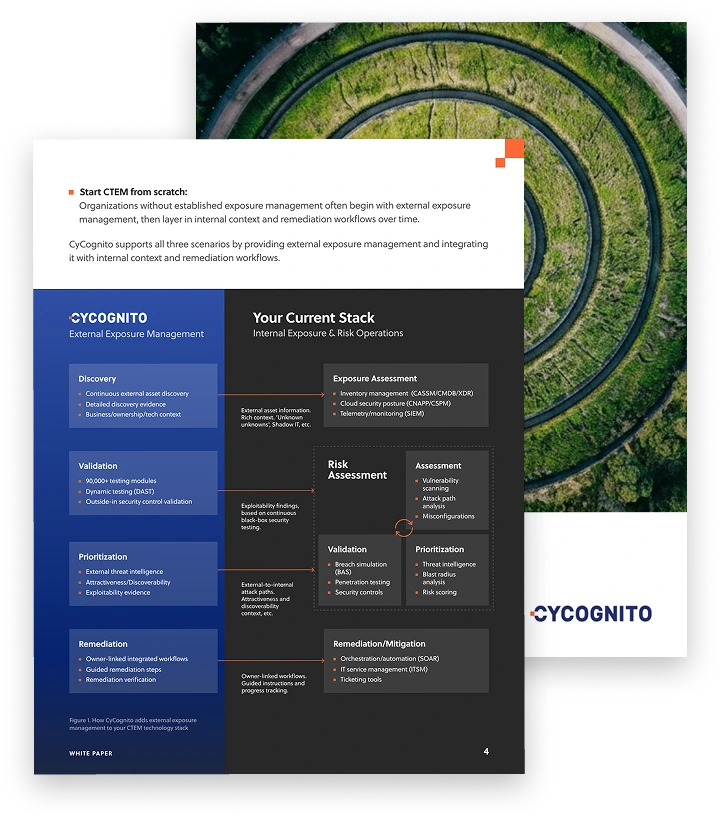

Operationalizing CTEM Through External Exposure Management

CTEM breaks when it turns into vulnerability chasing. Too many issues, weak proof, and constant escalation…

This whitepaper offers a practical starting point for operationalizing CTEM, covering what to measure, where to start, and what “good” looks like across the core steps.

OWASP Top 10 LLM Risks

The Open Web Application Security Project (OWASP) has compiled a definitive list of risks facing LLMs and applications that use them.

1. Prompt Injection

Prompt injection occurs when user inputs manipulate an LLM’s behavior in unintended ways, often bypassing safety mechanisms or altering output formatting. These attacks can be direct (embedded in a prompt) or indirect (embedded in content retrieved by the model, for example, from websites or documents). Both types can lead to outcomes like sensitive data leakage, command execution, or decision manipulation.

Prompt injection is particularly difficult to defend against due to the interpretive nature of Large Language Models (LLMs). Even retrieval-augmented generation (RAG) and fine-tuning offer limited protection. Attackers can also employ techniques such as adversarial suffixes, multilingual obfuscation, or multimodal inputs (e.g., hiding commands in images).

Mitigations: Constraining model behavior through strict prompt design, output validation, semantic filters, user access controls, and human-in-the-loop checks. Adversarial testing is also essential to simulate and prepare for real-world exploitation scenarios.

2. Sensitive Information Disclosure

Large Language Models (LLMs) can unintentionally reveal sensitive data such as PII, credentials, or proprietary algorithms through their outputs. This can happen when training data includes confidential material or when system prompts and queries are poorly sanitized. Common vulnerabilities include inadvertent output of personal or corporate information and model inversion attacks.

Mitigations: Enforcing access controls, applying differential privacy, filtering inputs and outputs, and avoiding reliance on the model to enforce security boundaries. User education and transparency in data handling policies also reduce the risk of unintended disclosures.

3. Supply Chain Risks

LLM applications rely heavily on third-party data, models, and tools. This introduces risks such as poisoned datasets, compromised pre-trained models, and vulnerable extensions. Open-access repositories and collaborative model environments (e.g., Hugging Face) are common vectors for such risks.

Risks also include the use of outdated components, insecure LoRA adapters, and unclear licensing terms. Attacks may involve direct tampering, Trojaned models, or repackaged mobile apps.

Mitigations: Using trusted suppliers, code and model signing, AI-specific SBOMs, red teaming, and robust patching processes.

4. Data and Model Poisoning

Poisoning attacks involve malicious or flawed data introduced during pretraining, fine-tuning, or embedding. This may degrade model performance or insert backdoors that activate under specific inputs.

Common tactics include hidden prompts in documents, adversarial examples, or prompt injection during inference. Poisoned data can cause misinformation, harmful outputs, or biased behaviors.

Mitigation and prevention: Data source verification, sandboxing, data version control, and monitoring for anomalies or behavioral drift during model training and inference.

5. Improper Output Handling

LLM-generated content can lead to vulnerabilities when outputs are passed to other systems without validation or sanitization. Risks include XSS, SQL injection, remote code execution, and SSRF. This is especially dangerous when models interface with privileged systems or extensions.

Mitigation: Encoding outputs by context (e.g., HTML, SQL), using parameterized queries, setting content security policies, and monitoring output patterns. Treating the LLM as an untrusted user is key to safe integration.

6. Excessive Agency

Large Language Models (LLMs) integrated with plugins or tools may be given too much functional power, such as executing shell commands or modifying databases. If these extensions are over-permissive or not tightly scoped, attackers may coerce the LLM into triggering harmful actions.

Common causes include extensions with excessive functionality, excessive permissions, or autonomy without human oversight.

Mitigations: Limiting extensions, enforcing least privilege, user context execution, and requiring manual approval for critical actions.

Tips from the Expert

Rob Gurzeev, CEO and Co-Founder of CyCognito, has led the development of offensive security solutions for both the private sector and intelligence agencies.

In my experience, here are tips that can help you better secure LLM applications across the full lifecycle (from prompt assembly to downstream actions):

- Context firewall for prompt assembly: Build a “context compiler” that tags every chunk (source, owner, sensitivity, TTL) and enforces hard rules (e.g., “no secrets in system/developer prompts”, “PII only if user has purpose + entitlement”) before anything reaches the model.

- Retrieval integrity, not just retrieval relevance: Sign or hash RAG documents/chunks at ingestion and verify at query time; store provenance (URL/repo commit, ingestion pipeline ID) so poisoned or swapped content is detectable and rollbackable.

- Separate policy decisions from the model with a deterministic gate: Use a non-LLM policy engine (OPA/Cedar/etc.) to approve/deny tool use, data classes, and high-risk intents; the model can propose actions, but it should never be the final authority.

- Treat tool outputs as hostile inputs to the next step: Normalize and sanitize tool outputs before they are re-fed into the model (strip hidden instructions, enforce schema, remove executable snippets), since indirect injection often enters via “trusted” tools.

- Build “security regression tests” into CI for prompts and agents: Maintain a curated set of jailbreak/prompt-injection fixtures and golden expectations; fail the build if a prompt/template/tool description change reintroduces a previously fixed weakness.

7. System Prompt Leakage

System prompts may contain sensitive logic, configurations, or even credentials. If leaked through prompt injection or model responses, they can be used to craft further attacks or bypass restrictions.

Leakage risks include revealing business logic, role-based access rules, or content filtering mechanisms.

Mitigation and prevention: Keeping prompts free of sensitive data, externalizing control logic, and using deterministic guardrails outside of the LLM. Effective privilege separation must be enforced outside the model layer.

8. Vector and Embedding Weaknesses

In RAG systems, vector stores and embedding logic introduce risks such as embedding inversion (revealing original data), cross-tenant data leakage, and poisoned vector content. Improper access control can expose private or licensed content.

Mitigations: Permission-aware vector stores, authenticated data sources, classification-based access control, and logging. When combining data from different sources, consistency and compatibility checks are essential to avoid conflict or unintended leakage.

9. Misinformation and Harmful Content

Large Language Models may generate believable but false information (hallucinations), especially when fine-tuned on incomplete or biased data. Overreliance on model outputs without verification can lead to bad decisions, reputational harm, or legal consequences.

Mitigations: implementing RAG to ground outputs in reliable sources, enforcing human oversight, adding output validation layers, and educating users on risks. Clear risk communication and secure code suggestions also help mitigate downstream damage.

10. Unbounded Consumption

LLM inference is resource-intensive. Without controls, attackers can exploit this to cause denial of service, inflate costs (denial of wallet), or extract model behavior. Model replication through API queries is another threat.

Mitigation: Rate limiting, input validation, resource quotas, timeout mechanisms, watermarking to detect output reuse, and strong access controls. Systems should also implement anomaly detection, sandboxing, and restrict access to logit-related metadata to prevent extraction and misuse.

Best Practices for Securing LLMs

Here are some common LLM security best practices that can help organizations protect LLM applications and ensure safe usage.

1. Input Sanitization and Filtering

Input sanitization and filtering are critical for reducing the risk of injection attacks and minimizing malicious input reaching LLMs. All logins, queries, prompts, or API payloads sent to a model should be checked for harmful content, such as embedded commands, code snippets, or toxic language. Input sanitization uses pattern matching, allowlisting, and normalization techniques to clean and validate user inputs, significantly lowering the risk posed by adversarial or malformed queries.

Consistent application of filtering alongside access controls also helps prevent sensitive or inappropriate language and instructions from reaching the model, lowering risks of abuse, prompt injection, and output manipulation. Combining regular expressions, keyword checking, and context-aware filters is far more effective than relying on basic text cleansing alone. These controls should be updated as threats evolve.

2. Output Validation

LLM-generated outputs should always be validated before further processing or display to users. Output validation ensures that malicious, sensitive, or policy-violating content does not propagate beyond the model’s boundaries. Automated validation of model outputs can include syntax checking, semantic consistency analysis, or cross-referencing against allowlists or blocklists, depending on the application’s requirements.

When Large Language Models are integrated into workflows where model outputs could trigger further actions, including code execution, financial transactions, or system updates, validation becomes even more important. Deployers should establish post-processing stages to intercept and review suspicious outputs, combining automated checks with manual sampling for particularly high-impact cases.

3. Rate Limiting

Implementing rate limiting is essential for controlling access to LLM-powered endpoints and preventing abuse, such as denial-of-service attacks or excessive consumption of resources. Each user, IP address, or API key should have well-defined quotas, with mechanisms in place to detect and throttle bursts of activity. Effective rate limiting reduces exposure to high-frequency attacks and helps maintain service availability for legitimate users.

Rate limits should be adjusted based on resource demands, organizational risk appetite, and user access patterns. Implementing layered rate limiting—at application, API, and backend infrastructure levels—provides resilience against bypass attempts and distributed attack strategies targeting LLM-based services.

4. Use Sandboxing

Sandboxing isolates LLM execution environments from critical systems, reducing the risk that compromised or misbehaving models cause broader damage. By running Large Language Models in controlled, restricted environments, organizations can prevent untrusted code execution, limit file system access, and monitor for suspicious activity in real time. Sandboxing also aids in rapid containment if an LLM begins producing malicious outputs or acting outside normal policy bounds.

Adopting sandboxing is especially important for LLMs tasked with code generation, automation, or decision-making roles in sensitive domains. It limits the impact of prompt injection attacks and data breaches. Combining virtualization, process isolation, and resource control minimizes attack impact, providing an extra security layer without compromising LLM utility or responsiveness.

5. Adopt Specialized Security Tools

Securing LLMs effectively requires using specialized security tools designed for AI and ML environments. These may include adversarial prompt detectors, LLM-aware input/output firewalls, privacy-preserving analytics platforms, or automated red-teaming solutions. Off-the-shelf security software is often inadequate for the unique threats presented by Large Language Models, making tool selection a critical part of any cybersecurity strategy.

Integration of specialized tools should be matched to organizational needs and risk exposure, with regular reviews to ensure tools evolve alongside emerging threats. Organizations should also contribute to and draw from the growing open-source ecosystem of LLM and AI-specific security resources to increase resiliency and collective defense.

6. Continuous Monitoring and Logging

Continuous monitoring and comprehensive logging enable organizations to detect suspicious activity, diagnose incidents, and comply with regulatory requirements. Detailed logs should capture all prompt submissions, LLM responses, access events, and system interactions, providing an audit trail for forensic analysis if an incident occurs. Monitoring should extend to performance metrics, anomaly detection, and outputs flagged for further investigation.

Real-time alerting based on behavioral patterns—such as repeated output of sensitive data, spikes in API usage, or anomalous model responses—can accelerate incident response and limit the blast radius of successful attacks like data breaches. Organizations should ensure that monitoring infrastructure is itself secured and subject to regular review to preserve data integrity and confidentiality.

7. Adversarial Testing and Red Teaming

Adversarial testing and red-teaming are proactive strategies for identifying vulnerabilities before attackers do. Security teams, internal experts, or third-party specialists systematically probe LLM deployments with crafted prompts, attack techniques, and edge cases, seeking to bypass guardrails or induce failure modes. This rigorous approach uncovers weaknesses that traditional QA or user acceptance testing may not reveal.

Insights from red-teaming efforts inform long-term resilience efforts, from refining filters to retraining models and hardening infrastructure. Periodic adversarial testing, ideally at each major deployment or system upgrade, ensures that LLM cybersecurity keeps pace with the rapidly evolving threat landscape and the arms race between defenders and attackers.

LLM Security with CyCognito

LLM applications introduce new risks and new operational complexity. Prompt injection is one example, but the broader shift is that teams are deploying LLM-enabled services and integration layers quickly, and new externally reachable entry points can appear outside normal review cycles.

LLM security controls and reviews are often scoped and periodic. That approach is misaligned with LLM environments that change continuously (new chat experiences, new API endpoints, new connectors, new routes to data and downstream tools).

CyCognito complements LLM security by adding continuous external discovery and monitoring for LLM-related entry points. If you already use AppSec tooling, vulnerability scanners, cloud security platforms, or periodic assessments, CyCognito strengthens your program by:

- Continuously discovering externally reachable LLM entry points (public chat experiences, LLM API endpoints, RAG-backed apps, and integration services), including unmanaged and newly deployed services

- Maintaining an up-to-date external asset inventory as services and configurations change

- Providing reachability context so teams can understand what is exposed and where it is reachable from

- Supporting prioritization by tying entry points to ownership and asset criticality, so the right team can take action faster

By shifting from periodic identification and coverage gaps to continuous visibility into externally reachable LLM entry points, CyCognito helps LLM security programs stay current as AI infrastructure changes.