What Is Shadow AI?

Shadow AI refers to the unauthorized, unmonitored use of generative AI tools and applications by employees for work purposes, bypassing formal IT security and governance. This typically happens when users deploy or access AI-powered services, often based on large language models (LLMs) such as ChatGPT, Claude, or Gemini, in ways that knowingly or unknowingly deviate from company guidelines and security protocols.

Shadow AI often arises from the need for agility and innovation, as employees seek effective solutions outside the slower, more regulated channels provided by their employers. The growth of accessible AI services has made it easy for non-technical workers to adopt these tools without oversight. This phenomenon is an extension of shadow IT, where staff circumvent official IT processes by using unauthorized devices or software.

This is part of a series of articles about AI security

What Are the Risks of Shadow AI?

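Shadow AI tools expose organizations to three main categories of risk: data breaches and security vulnerabilities, regulatory noncompliance, and reputational damage.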

Data Breaches and Security Vulnerabilities

The unauthorized use of AI tools outside approved organizational controls exposes sensitive information to heightened risks. Employees may upload proprietary data, client information, or regulated records into third-party AI platforms, often without understanding the risks to confidentiality. These platforms might not meet the security standards or data handling policies required by the organization, creating gaps in protection. If the third-party provider experiences a breach or if the data is improperly handled, confidential material could become exposed or stolen.

Additionally, shadow AI services could become entry points for cyber attackers. Many AI tools integrate with internal systems using API keys or tokens. If these credentials are shared insecurely or stored improperly, attackers could exploit them to access corporate resources. IT teams are often unaware of these unofficial integrations, which means vulnerabilities may go undetected and unpatched, compounding the risk of large-scale compromise.
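As a rough illustration of how improperly stored credentials might be spotted, the sketch below scans text (for example, a config file or CI variable dump) for strings that look like AI provider API keys. The regular expressions are approximations based on commonly seen key prefixes and are assumptions, not official formats; `find_ai_keys` is a hypothetical helper name.

```python
import re

# Approximate, illustrative patterns. Real key formats vary by provider
# and change over time, so treat these as assumptions.
KEY_PATTERNS = {
    "anthropic": re.compile(r"\bsk-ant-[A-Za-z0-9_\-]{20,}"),
    "openai": re.compile(r"\bsk-(?!ant-)[A-Za-z0-9_\-]{20,}"),
    "google": re.compile(r"\bAIza[A-Za-z0-9_\-]{35}"),
}

def find_ai_keys(text):
    """Return (line_number, provider) pairs for lines that appear to contain an AI API key."""
    hits = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for provider, pattern in KEY_PATTERNS.items():
            if pattern.search(line):
                hits.append((lineno, provider))
    return hits
```

A scanner like this only reports where a key-like string appears; it never needs to log the key itself, which avoids creating a second copy of the secret.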

Noncompliance With Regulations

Organizations face strict legal requirements concerning data privacy, such as GDPR, HIPAA, or industry-specific frameworks. When employees use unapproved AI tools, they often bypass controls intended to enforce regulatory compliance. Sensitive data entered into these platforms may be sent to data centers in countries that have weaker protections or that lack legal transfer agreements. This can lead to unauthorized cross-border data transfers and noncompliance with data residency laws.

Auditing and documentation are fundamental to demonstrating regulatory compliance. With shadow AI tools, these processes break down since usage is undocumented and accountability unclear. Regulators investigating incidents will find that organizations cannot prove appropriate safeguards or track how sensitive information was handled. This situation increases the likelihood of fines, sanctions, or mandatory corrective actions.

Reputational Damage

The fallout from a breach or regulatory issue triggered by shadow AI usage extends far beyond legal penalties. Public exposure of mishandled sensitive information or a headline-grabbing data leak can seriously damage an organization’s reputation. Customers, partners, and investors may lose confidence in the company’s ability to manage data securely, potentially resulting in lost business opportunities and long-term revenue decline.

Negative publicity from misuse of AI tools, such as unintended data leaks, bias in AI-driven decisions, or associations with ethically questionable AI providers, can lead to critical scrutiny. Organizations may face backlash from the public, the media, and industry peers. The resulting reputational harm can take years to repair.

Shadow AI vs. Shadow IT

Shadow AI and shadow IT are related concepts but differ in important ways.

Shadow IT broadly refers to the use of unauthorized hardware, software, or services within an organization: anything that sidesteps IT’s formal approval and oversight. Traditionally, this included using personal devices, free cloud storage, or unapproved applications for work. The primary risks revolved around unmanaged data, inconsistent security, and compliance gaps.

Shadow AI centers specifically on artificial intelligence, including tools, AI models, or services that analyze data or generate content without organizational control. While shadow AI falls under the larger umbrella of shadow IT, its risks are amplified by the highly sensitive nature of data ingested into AI platforms, the rapid adoption of generative AI, and the difficulty of auditing black-box algorithms. Managing shadow AI tools requires a more tailored approach, as traditional shadow IT controls may not adequately address the novel challenges posed by AI usage.

Tips from the Expert

Rob Gurzeev, CEO and Co-Founder of CyCognito, has led the development of offensive security solutions for both the private sector and intelligence agencies.

In my experience, here are tips that can help you better manage shadow AI risk without slowing the business to a crawl:

- Map AI exposure via identity trails, not just network logs: Mine IdP logs for OAuth app grants, “Sign in with Google/Microsoft” consent, and token refresh patterns to find shadow AI tools even when traffic is encrypted or routed through personal devices.

- Build an “AI allowlist” at the egress layer with protocol fingerprinting: Use SNI/JA3/JA4-style fingerprints (plus known CDN patterns) to spot AI SaaS endpoints that don’t show up cleanly as domains, then enforce allow/deny and logging at the secure web gateway.

- Detect high-risk copy/paste flows with lightweight DLP triggers: Focus DLP on exfil paths typical for shadow AI (clipboard → browser, file upload fields, chat textareas) and only on sensitive classifiers—this catches the real leaks without blanket surveillance.

- Create sanctioned “prompt wrappers” that auto-redact and label data: Provide an internal prompt portal or browser extension that strips secrets/PII, applies data labels, and injects usage policy headers—employees keep speed, security keeps control.

- Govern AI through API keys and spend telemetry: Inventory and alert on new OpenAI/Anthropic/Gemini keys in code repos, CI variables, and endpoint configs; correlate with finance/expense data to uncover paid shadow usage that bypasses IT.
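To make the "prompt wrapper" tip more concrete, here is a minimal sketch of the redaction step such a portal might apply before a prompt leaves the organization. The rule names, patterns, and the `redact_prompt` function are hypothetical; a production wrapper would likely rely on the organization's own DLP classifiers rather than a handful of regular expressions.

```python
import re

# Illustrative rules only: (label, pattern) pairs for data that should not
# reach an external AI service. These are assumptions, not a complete set.
REDACTION_RULES = [
    ("EMAIL", re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")),
    ("US_SSN", re.compile(r"\b\d{3}-\d{2}-\d{4}\b")),
    ("API_KEY", re.compile(r"\bsk-[A-Za-z0-9_\-]{20,}")),
]

def redact_prompt(prompt):
    """Replace sensitive-looking substrings with placeholders.

    Returns the cleaned prompt and the list of labels that were triggered,
    which can be attached to the request as data labels for auditing.
    """
    labels = []
    for label, pattern in REDACTION_RULES:
        prompt, count = pattern.subn(f"[{label}_REDACTED]", prompt)
        if count:
            labels.append(label)
    return prompt, labels
```

Returning the triggered labels alongside the cleaned text lets the wrapper log what kind of data was caught without storing the sensitive values themselves.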

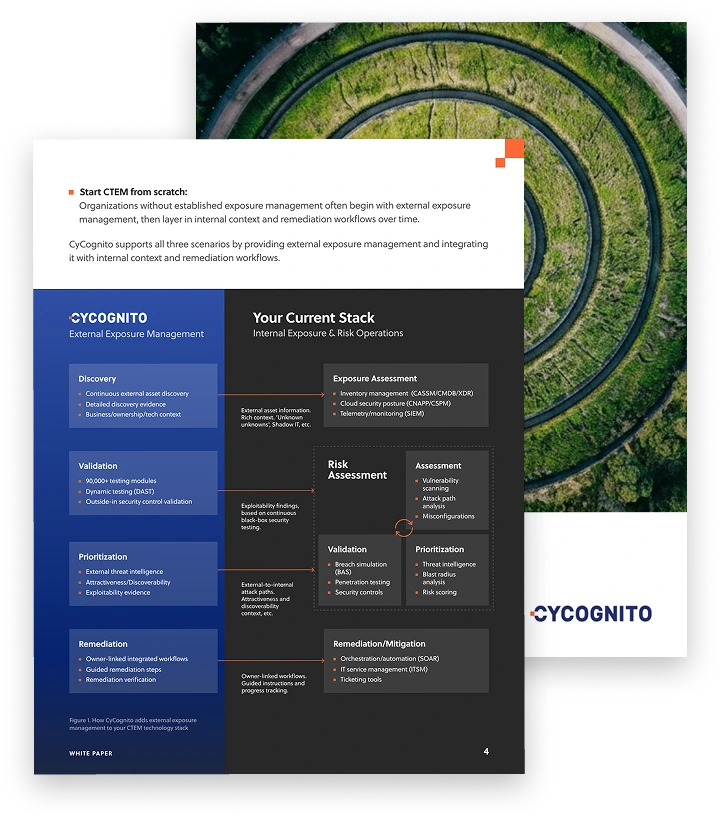

Operationalizing CTEM Through External Exposure Management

CTEM breaks when it turns into vulnerability chasing. Too many issues, weak proof, and constant escalation…

This whitepaper offers a practical starting point for operationalizing CTEM, covering what to measure, where to start, and what “good” looks like across the core steps.

Key Causes of Shadow AI

Shadow AI doesn’t emerge in a vacuum—it often results from specific organizational gaps and user motivations. Understanding these root causes is essential for building effective governance strategies. Below are the primary factors that contribute to the rise of shadow AI:

- Lack of clear AI policies: Many organizations have not yet developed formal policies that define acceptable AI usage. In the absence of clear guidance, employees turn to external tools they believe will help them be more productive or creative, often unaware of the associated risks.

- Slow adoption of official AI tools: When sanctioned AI solutions are delayed due to lengthy procurement, evaluation, or integration cycles, users may opt for freely available or faster-to-access alternatives. This disconnect between user needs and IT readiness drives shadow AI adoption.

- Ease of access to public AI services: Cloud-based AI tools, such as ChatGPT, Midjourney, or Google Gemini (formerly Bard), are easily accessible, often free or low-cost, and require no installation or approval. This convenience lowers the barrier to entry and encourages independent use without organizational oversight.

- Pressure to innovate and improve efficiency: Teams are under constant pressure to do more with less. AI tools promise increased productivity, automation, and insights, making them attractive shortcuts for employees seeking faster results.

- Lack of awareness about security and compliance: Non-technical employees often do not understand how AI tools handle data, nor are they fully aware of compliance obligations. As a result, they may unknowingly expose sensitive information or violate data governance requirements.

- Cultural gaps between IT and business units: When IT is perceived as a gatekeeper rather than a collaborator, business units may work around formal processes. A lack of communication and alignment on technology goals can lead departments to adopt AI independently.

Understanding these drivers helps organizations proactively address shadow AI by closing policy gaps, improving internal education, and aligning AI deployment with user needs.

Common Examples of Shadow AI Usage

Generative AI Chatbots

Generative AI chatbots, such as OpenAI’s ChatGPT or Google Gemini, are one of the most common forms of shadow AI in the workplace. Employees often use them to draft emails, rewrite documents, summarize reports, or generate code without notifying IT or security teams.

Because these tools are cloud-based and easy to access through a browser or mobile device, they can be adopted informally across departments. The main concern is that employees may paste sensitive company, customer, or partner information into these systems without understanding how the data is processed, stored, or reused.

Examples:

- A sales manager copies a full client contract into a chatbot and asks it to summarize key terms and risks.

- A recruiter uploads a spreadsheet of candidate resumes and asks the chatbot to rank applicants based on job requirements.

- An operations employee pastes internal pricing data into an AI tool to generate a competitor comparison report.

- A project lead shares meeting notes containing confidential roadmap details and asks the chatbot to create a status update email.

Developers Using Unauthorized AI Coding Assistants

Developers often adopt AI coding assistants such as GitHub Copilot or Amazon CodeWhisperer to speed up coding, debugging, and documentation. In many cases, these tools are enabled without formal approval, especially when developers install browser plugins or IDE extensions on their own.

This creates a shadow AI risk because proprietary source code, internal APIs, and system logic may be transmitted to an external AI provider. Unauthorized AI tools also bypass internal controls such as secure development policies, code review standards, and approved dependency management processes.

Examples:

- A backend developer uses an unauthorized AI assistant to rewrite authentication logic and unknowingly introduces a security flaw.

- A developer pastes proprietary algorithm code into a chatbot and asks for performance optimization suggestions.

- A contractor enables an AI coding extension in their IDE and uses it while working on internal infrastructure scripts.

- A software engineer asks an AI tool to generate Terraform templates and accidentally deploys overly permissive cloud access rules.

ML Models for Data Analysis

Employees in departments such as marketing, finance, or research sometimes build machine learning models outside approved environments to analyze data more quickly. Instead of using a managed analytics platform, they may run AI models in public cloud notebooks, with open-source frameworks, or on personal laptops.

These shadow ML workflows often involve sensitive customer data, internal business metrics, or proprietary datasets without proper access controls. Because the model training process happens outside centralized oversight, the organization may lose visibility into how data is handled, stored, and shared.

Examples:

- A marketing analyst uploads customer purchase history into an unapproved cloud notebook to build a churn prediction model.

- A finance employee downloads transaction data to a personal laptop and runs a machine learning model to detect fraud patterns.

- A product team trains a recommendation model using user behavior logs stored in an unsecured external storage bucket.

- A research analyst builds a model using internal performance metrics but fails to document the dataset version or training process, making results impossible to reproduce.

How to Manage the Risks of Shadow AI

1. Structured Access Governance

Implementing structured access governance is crucial for controlling which users can deploy, access, or interact with AI tools. Organizations should create formal approval chains and access controls to ensure only authorized personnel utilize AI platforms, with permissions based on roles and business needs. Centralized tracking of AI tool usage reduces the likelihood of unauthorized access and helps identify potential instances of shadow AI before they escalate into major incidents.

Regular review and enforcement of these governance controls is necessary as workloads and technology evolve. By integrating identity management and access controls into AI-related workflows, organizations can maintain oversight over AI use and data security. Coupling such measures with periodic audits and automated alerts helps IT teams respond quickly to deviations, ensuring AI usage remains within the defined security and compliance boundaries.
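A role-based approval check of the kind described above could look roughly like the following sketch. The tool names, roles, and the `check_ai_access` function are hypothetical placeholders; in practice the policy table would come from the identity provider or an access management system rather than a hard-coded dictionary.

```python
from dataclasses import dataclass

# Hypothetical policy table: tool name -> roles approved to use it.
APPROVED_TOOLS = {
    "internal-chat-assistant": {"engineering", "marketing", "finance"},
    "code-assistant": {"engineering"},
}

@dataclass(frozen=True)
class AccessDecision:
    allowed: bool
    reason: str

def check_ai_access(role, tool):
    """Decide whether a role may use a tool, with a reason suitable for audit logs."""
    roles = APPROVED_TOOLS.get(tool)
    if roles is None:
        return AccessDecision(False, "tool not approved; submit an intake request")
    if role not in roles:
        return AccessDecision(False, f"role '{role}' is not authorized for {tool}")
    return AccessDecision(True, "approved")
```

Recording the reason with every decision gives periodic audits a trail to review, and a denial for an unknown tool doubles as a signal that someone may be reaching for a shadow AI service.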

2. Risk-Based Classification

Risk-based classification involves categorizing AI applications according to the sensitivity of the data they process, their integration depth with internal systems, and their potential impact on business operations. By establishing a framework that differentiates between low-risk and high-risk AI activities, organizations can allocate monitoring and controls proportionately. High-risk applications, such as those handling customer data or critical infrastructure, should face stricter checks, data loss prevention mechanisms, and real-time auditing requirements.

This targeted approach allows resource optimization, as not all AI use cases warrant the same level of scrutiny. Employees are less likely to circumvent controls when guidance is clear and proportional, helping balance productivity and risk. Ongoing assessment is needed to capture new threats or changes in how AI tools are used, so risk classifications remain relevant and actionable.
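One simple way to express such a framework is to rate each AI use case on the three factors named above and take the highest rating as its tier. The sketch below assumes that "worst factor wins" rule, which is only one possible design. The controls for the HIGH tier come from the text; those listed for LOW and MEDIUM are placeholder assumptions.

```python
from enum import IntEnum

class Level(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3

# Controls per tier. HIGH mirrors the measures named in the text;
# LOW and MEDIUM entries are illustrative assumptions.
CONTROLS = {
    Level.LOW: ["usage logging"],
    Level.MEDIUM: ["usage logging", "periodic review"],
    Level.HIGH: ["stricter checks", "data loss prevention", "real-time auditing"],
}

def classify_ai_use(data_sensitivity, integration_depth, business_impact):
    """Return the risk tier: the highest of the three factor ratings."""
    return max(data_sensitivity, integration_depth, business_impact)
```

For example, a chatbot that touches customer data (`Level.HIGH`) but has no system integration (`Level.LOW`) still lands in the HIGH tier, so `CONTROLS[classify_ai_use(Level.HIGH, Level.LOW, Level.MEDIUM)]` returns the strictest control set.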

3. Continuous Monitoring and Discovery

Continuous monitoring and discovery tools scan the organization’s environment for unauthorized AI usage, uncovering shadow deployments as they emerge. Network monitoring, data flow analysis, and AI-specific detection platforms can reveal telltale signs of unsanctioned data transfers or connections to external AI services. This enables IT and security teams to react in near real time to policy violations or emerging vulnerabilities, minimizing potential exposure.

Beyond detection, continuous monitoring provides valuable insights for trend analysis and incident response. Over time, data gathered through these tools helps identify usage patterns across departments, highlighting where shadow AI is most prevalent. This allows leadership to adjust security policy, employee engagement, and resource allocation to proactively counter shadow AI risks.
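As a small sketch of the discovery and trend steps, the function below counts connections to known AI services per department from web proxy records. The record shape (`host` and `department` keys), the domain lists, and the function names are assumptions for illustration; real deployments would read from the secure web gateway or SIEM and maintain a much larger, regularly updated domain list.

```python
from collections import Counter

# Illustrative domain lists; both are assumptions and far from complete.
AI_DOMAINS = {"chatgpt.com", "chat.openai.com", "claude.ai", "gemini.google.com"}
SANCTIONED = {"gemini.google.com"}  # hypothetical: the one approved service

def is_listed(host, domains):
    """True if host equals a listed domain or is a subdomain of one."""
    host = host.lower().rstrip(".")
    return any(host == d or host.endswith("." + d) for d in domains)

def shadow_ai_by_department(records):
    """Count requests to unsanctioned AI services, grouped by department."""
    counts = Counter()
    for rec in records:
        host = rec["host"]
        if is_listed(host, AI_DOMAINS) and not is_listed(host, SANCTIONED):
            counts[rec["department"]] += 1
    return counts
```

Calling `shadow_ai_by_department(records).most_common()` then ranks departments by unsanctioned usage, which is the kind of pattern leadership can use to target training or fast-track sanctioned alternatives.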

4. Lifecycle and Intake Processes

Establishing robust intake and lifecycle processes for AI tools is fundamental to managing risk from adoption through decommissioning. Every request to use or integrate an AI system should follow a standardized intake workflow that evaluates security, privacy, and compliance impacts. Clear guidelines for updating, upgrading, or retiring AI tools ensure that vulnerabilities do not persist as technology or organizational requirements change.

Lifecycle management extends beyond deployment to include regular reassessment and decommissioning of outdated or noncompliant AI systems. This discipline prevents abandoned projects or “zombie” models from lingering within the environment, where they could be exploited. Documenting each AI tool’s status and history supports effective oversight and simplifies compliance audits.
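A lightweight registry can support this kind of reassessment. The sketch below assumes a hypothetical record structure and a 180-day review interval, neither of which comes from the article; it flags every tool that is still active but has not been reviewed within the interval.

```python
from dataclasses import dataclass
from datetime import date, timedelta

@dataclass
class AIToolRecord:
    name: str
    owner: str
    status: str          # hypothetical values: "approved", "pilot", "retired"
    last_reviewed: date

def tools_needing_review(registry, today, max_age_days=180):
    """Return names of non-retired tools whose last review is older than max_age_days."""
    cutoff = today - timedelta(days=max_age_days)
    return [
        tool.name
        for tool in registry
        if tool.status != "retired" and tool.last_reviewed < cutoff
    ]
```

Passing `today` explicitly, rather than calling `date.today()` inside the function, keeps the check easy to test and to rerun for past audit dates.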

5. Employee Education and Awareness

Education and awareness campaigns are essential in reducing shadow AI risks at their source. Employees must understand why certain AI tools are approved, the risks of using unauthorized AI tools, and how to recognize and avoid noncompliant behavior. Regular training sessions, targeted awareness campaigns, and clear communication help build a culture of shared responsibility around technology use and data protection.

Effective education also empowers employees to participate in risk reduction. When staff know how to report suspected shadow AI tools or request sanctioned alternatives, they are less likely to go “under the radar.” Ongoing dialogue between IT, security, and business units is critical to adapt messaging as threats, user behavior, and the AI landscape evolve. This two-way communication helps organizations stay ahead of unauthorized adoption while maintaining productivity.

Governing Shadow AI with CyCognito

Shadow AI introduces new risks and new operational complexity. Employees can adopt AI tools, plugins, and integration services quickly, and new externally reachable entry points can appear outside normal review cycles.

Shadow AI controls and reviews are often scoped and periodic. That approach is misaligned with environments that change continuously (new AI SaaS accounts, new endpoints, new connectors, new routes to data and internal tools).

CyCognito complements shadow AI governance by adding continuous external discovery and monitoring for AI-related entry points. If you already use AppSec tooling, vulnerability scanners, cloud security platforms, or periodic assessments, CyCognito strengthens your program by:

- Continuously discovering externally reachable AI entry points and integration services, including unmanaged and newly deployed services

- Maintaining an up-to-date external asset inventory as services and configurations change

- Providing reachability context so teams can understand what is exposed and where it is reachable from

- Supporting prioritization by tying entry points to ownership and asset criticality, so the right team can take action faster

By shifting from periodic identification and coverage gaps to continuous visibility into externally reachable AI entry points, CyCognito helps shadow AI programs stay current as AI usage and infrastructure change.